What can be used to analyze scanned invoices and extract data, such as billing addresses and the total amount due?

Azure AI Search

Azure AI Document Intelligence

Azure AI Custom Vision

Azure OpenAI

The correct answer is B. Azure AI Document Intelligence (formerly Form Recognizer).

This Azure service uses AI and OCR technologies to analyze and extract structured data from documents such as invoices, receipts, and purchase orders. It identifies key fields like billing address, invoice number, total amount due, and line items. The service supports prebuilt models for common document types and custom models for specialized layouts.

Option review:

A. Azure AI Search: Used for knowledge mining and semantic search, not document data extraction.

B. Azure AI Document Intelligence — ✅ Correct. Designed for form and invoice extraction.

C. Azure AI Custom Vision: Used for image classification and object detection, not text extraction.

D. Azure OpenAI: Generates or processes language but not structured document data.

Therefore, Azure AI Document Intelligence is the right service to extract data from scanned invoices.

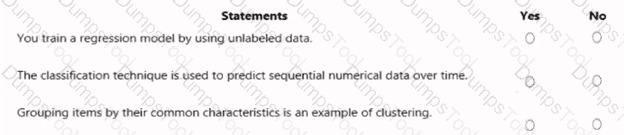

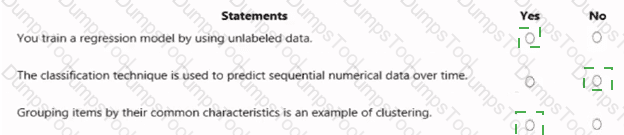

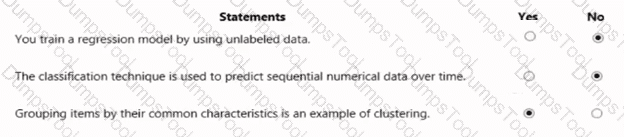

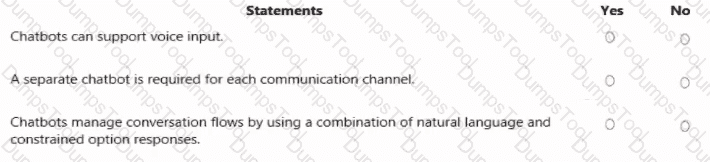

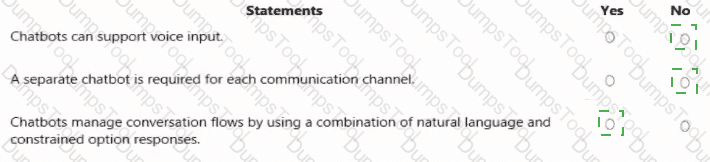

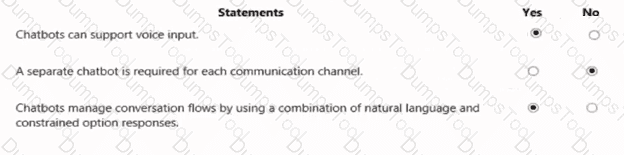

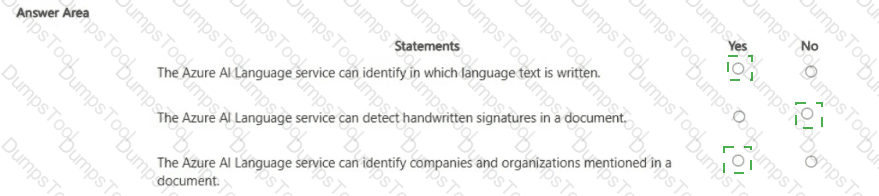

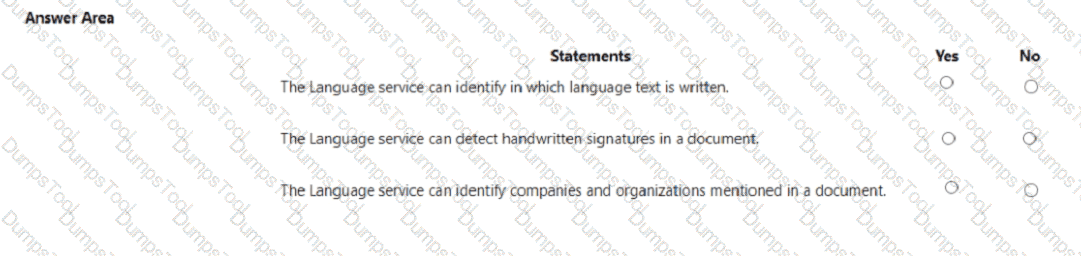

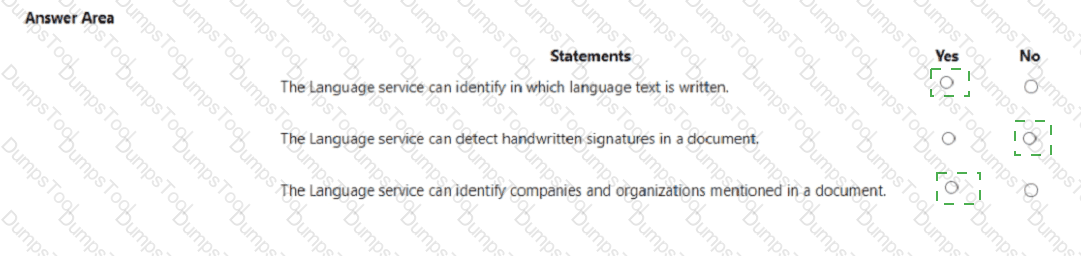

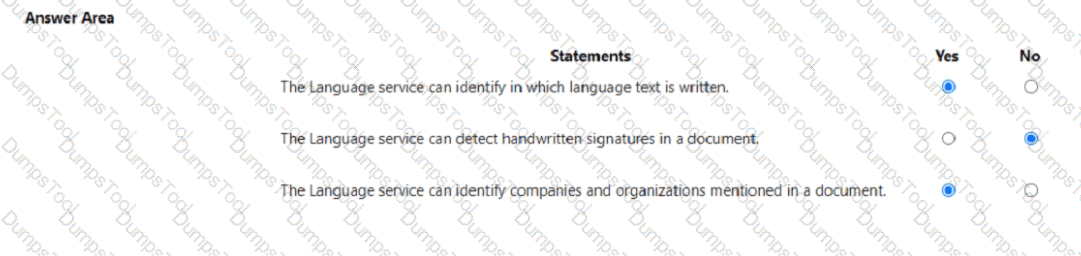

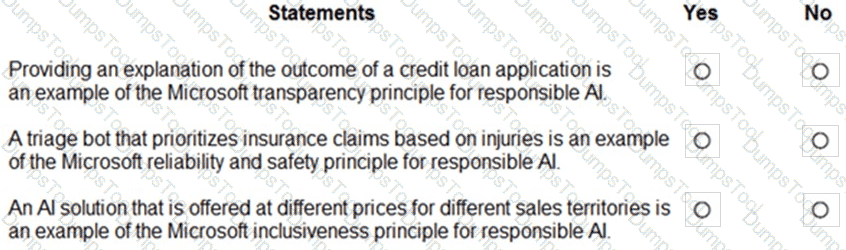

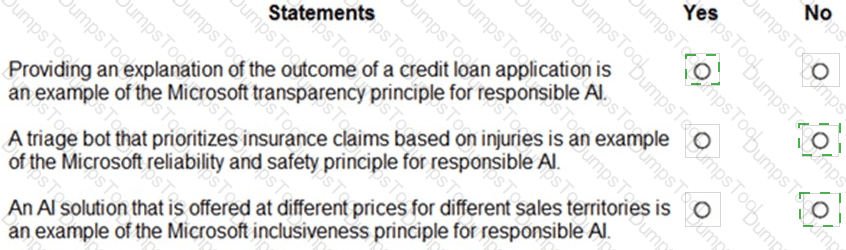

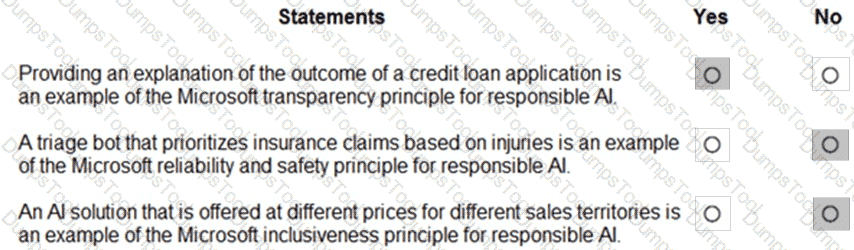

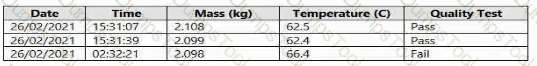

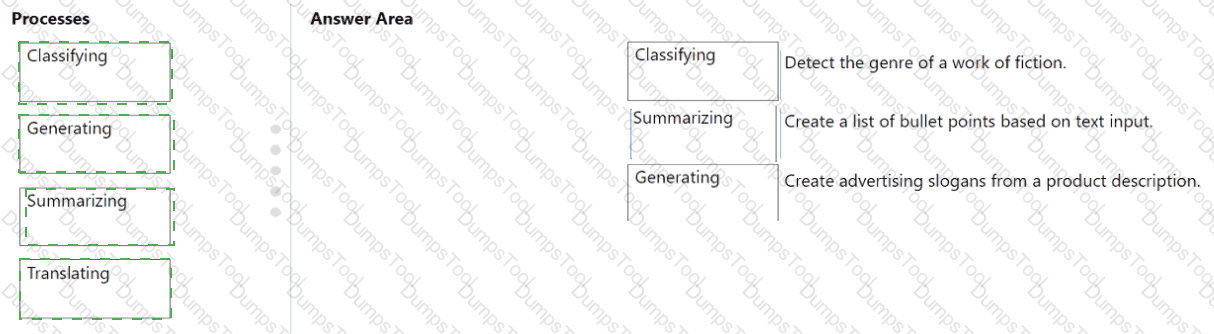

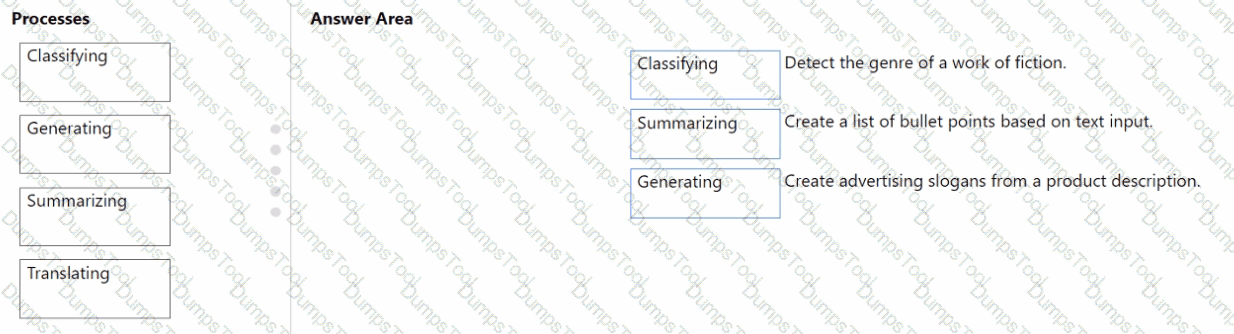

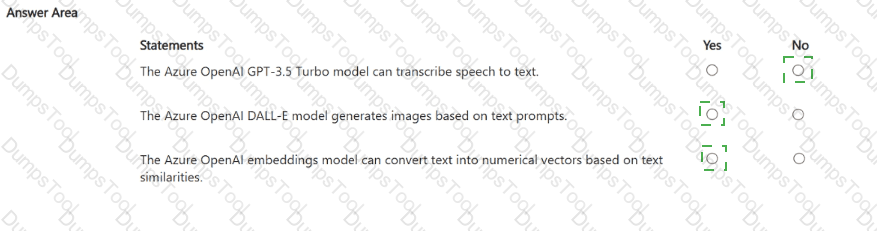

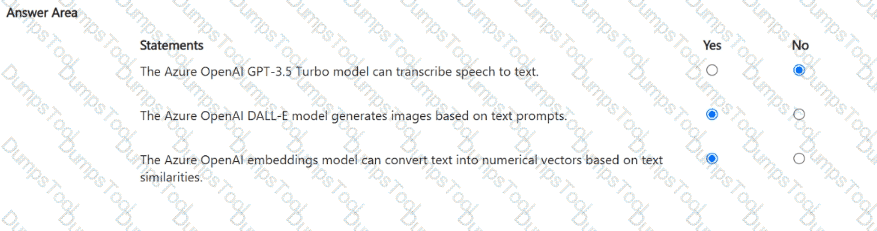

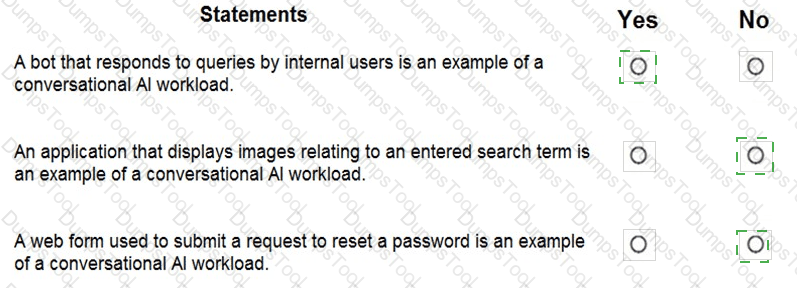

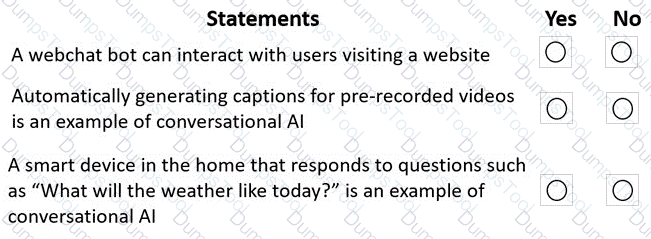

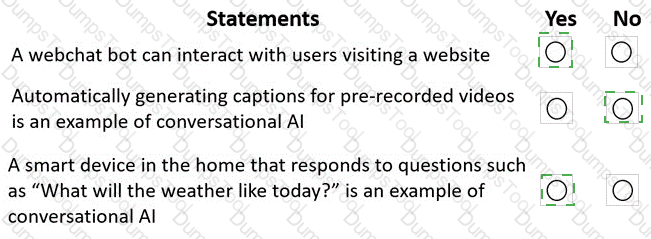

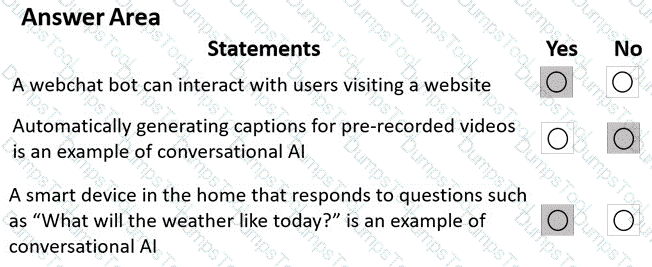

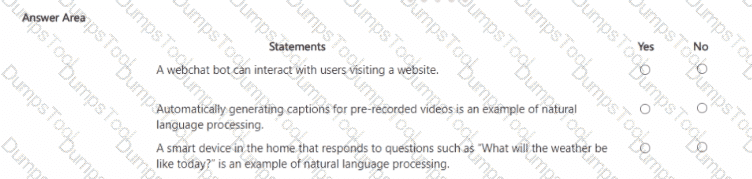

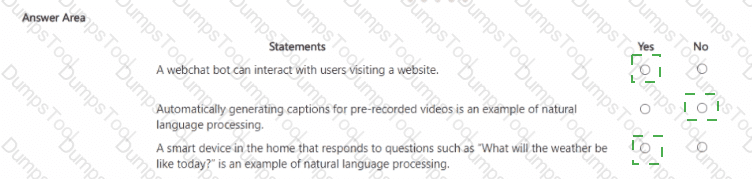

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

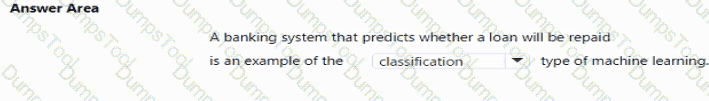

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Identify features of common machine learning types”, there are three main types of machine learning: supervised learning, unsupervised learning, and reinforcement learning. Within supervised learning, two common approaches are regression and classification, while clustering is a primary example of unsupervised learning.

“You train a regression model by using unlabeled data.” – No. Regression models are trained with labeled data, meaning the input data includes both features (independent variables) and target labels (dependent variables) representing continuous numerical values. Examples include predicting house prices or sales forecasts. Unlabeled data (data without target output values) cannot be used to train regression models; such data is used in unsupervised learning tasks like clustering.

“The classification technique is used to predict sequential numerical data over time.” – No. Classification is used for categorical predictions, where outputs belong to discrete classes, such as spam/not spam or disease present/absent. Predicting sequential numerical data over time refers to time series forecasting, which is typically a regression or forecasting problem, not classification. The AI-900 syllabus clearly separates classification (categorical prediction) from regression (continuous value prediction) and time series (temporal pattern analysis).

“Grouping items by their common characteristics is an example of clustering.” – Yes. This statement is correct. Clustering is an unsupervised learning technique used to group similar data points based on their features. The AI-900 study materials describe clustering as the process of “discovering natural groupings in data without predefined labels.” Common examples include customer segmentation or document grouping.

Therefore, based on Microsoft’s AI-900 training objectives and definitions:

Regression → supervised learning using labeled continuous data (No)

Classification → categorical prediction, not sequential numeric forecasting (No)

Clustering → grouping by similarity (Yes)
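The labeled-versus-unlabeled distinction can be sketched in plain Python. This is a minimal illustration and is not taken from the Microsoft material: the data values, the function names `fit_line` and `group_by_nearest`, and the fixed cluster centers are all made up for the example, and the grouping function shows only the assignment step of a clustering algorithm.

```python
from statistics import mean


def fit_line(xs, ys):
    """Least-squares fit of y = slope * x + intercept.

    Regression is supervised: it needs the labels ys as well as the features xs.
    """
    x_bar, y_bar = mean(xs), mean(ys)
    numerator = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    denominator = sum((x - x_bar) ** 2 for x in xs)
    slope = numerator / denominator
    return slope, y_bar - slope * x_bar


def group_by_nearest(values, centers):
    """Assign each unlabeled value to its nearest center.

    Clustering is unsupervised: no target values are supplied.
    """
    groups = {c: [] for c in centers}
    for v in values:
        nearest = min(centers, key=lambda c: abs(v - c))
        groups[nearest].append(v)
    return groups


# Labeled data: every size (feature) comes with a known price (label).
sizes = [50, 80, 100, 120]
prices = [150, 240, 300, 360]
slope, intercept = fit_line(sizes, prices)

# Unlabeled data: features only, grouped by similarity.
spend = [5, 7, 6, 95, 100, 98]
segments = group_by_nearest(spend, centers=[10, 90])
```

The regression call cannot run without `prices`, while the grouping call never sees a label, which is the difference the first and third statements test.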

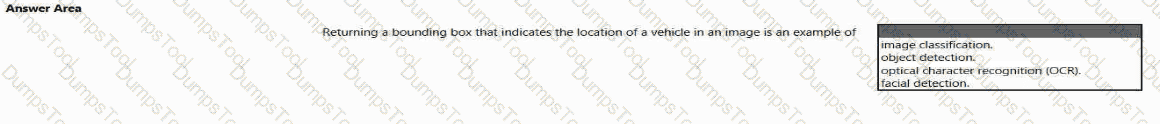

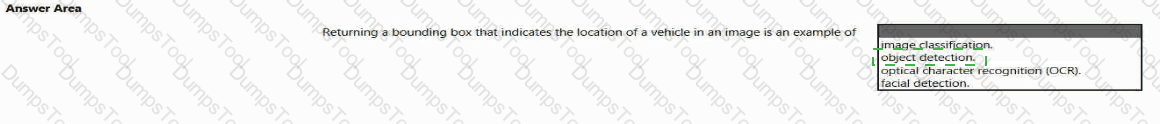

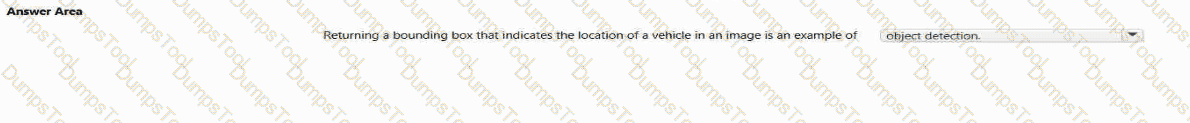

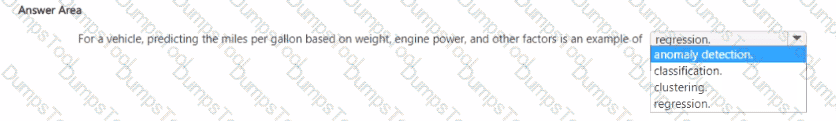

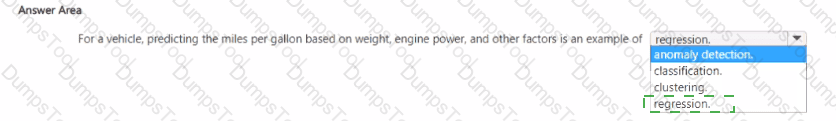

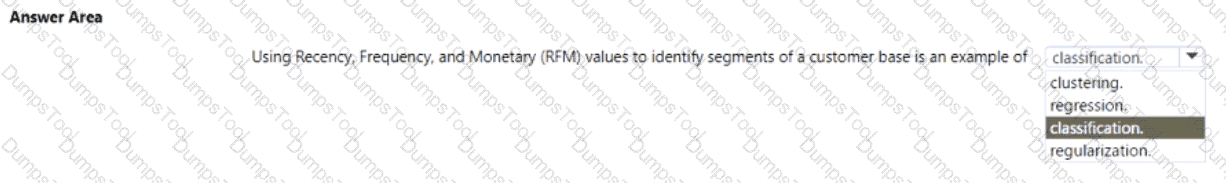

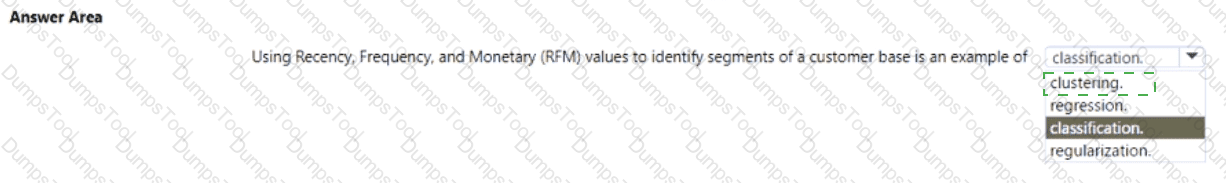

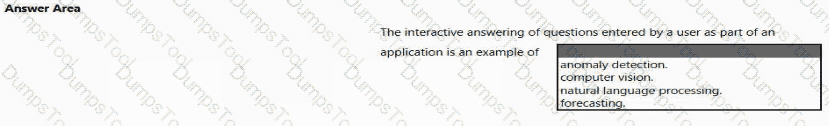

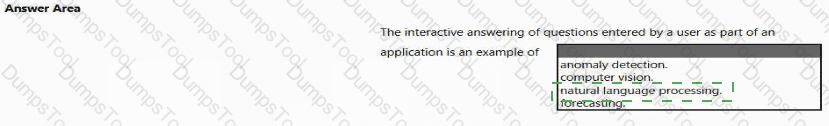

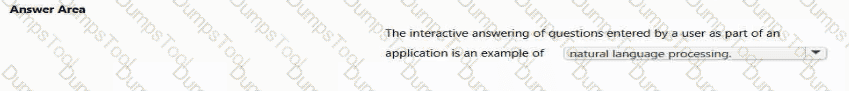

To complete the sentence, select the appropriate option in the answer area.

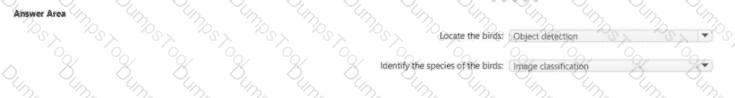

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials, object detection is a type of computer vision workload that not only identifies objects within an image but also determines their location by drawing bounding boxes around them. This functionality is clearly described in the Microsoft Learn module “Identify features of computer vision workloads.”

In this scenario, the AI system analyzes an image to find a vehicle and then returns a bounding box showing where that vehicle is located within the image frame. That ability — to detect, classify, and localize multiple objects — perfectly defines object detection.

Microsoft’s study content contrasts object detection with other computer vision workloads as follows:

Image classification: Determines what object or scene is present in an image as a whole but does not locate it (e.g., “this is a car”).

Object detection: Identifies what objects are present and where they are, usually returning coordinates for bounding boxes (e.g., “car detected at position X, Y”).

Optical Character Recognition (OCR): Extracts text content from images or scanned documents.

Facial detection: Specifically locates human faces within an image or video feed, often as part of face recognition systems.

In Azure, object detection capabilities are available through Azure AI Vision (formerly Computer Vision), which detects common objects with a pretrained model, and Azure AI Custom Vision, which can be trained to detect vehicles, products, or other objects in your own image datasets.

Therefore, based on the AI-900 study guide and Microsoft Learn materials, the verified and correct answer is Object detection, as it accurately describes the process of returning a bounding box indicating an object’s position in an image.
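The difference between the two workloads shows up in the shape of the result. The sketch below is illustrative only: the `Detection` class, the `(x, y, width, height)` box layout, and the sample values are assumptions made for this example, not the response format of any specific Azure API.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    label: str
    confidence: float
    box: tuple  # hypothetical layout: (x, y, width, height) in pixels


def find_objects(detections, label, min_confidence=0.5):
    """Keep detections of one label at or above a confidence threshold."""
    return [d for d in detections
            if d.label == label and d.confidence >= min_confidence]


# Image classification: a single label for the whole image, no location.
classification_result = "car"

# Object detection: one entry per object, each with a bounding box.
detection_result = [
    Detection("car", 0.92, (34, 120, 200, 95)),
    Detection("person", 0.81, (260, 80, 40, 110)),
    Detection("car", 0.30, (5, 5, 20, 10)),
]

vehicles = find_objects(detection_result, "car")
```

Here `vehicles` holds only the high-confidence car together with its box, which is the "where is the vehicle" information the question describes.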

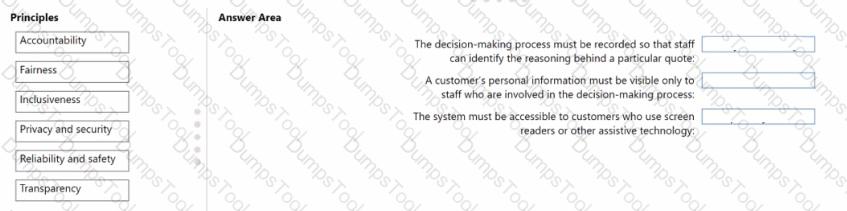

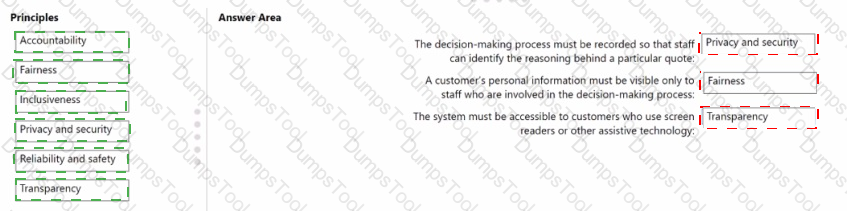

You are designing a system that will generate insurance quotes automatically.

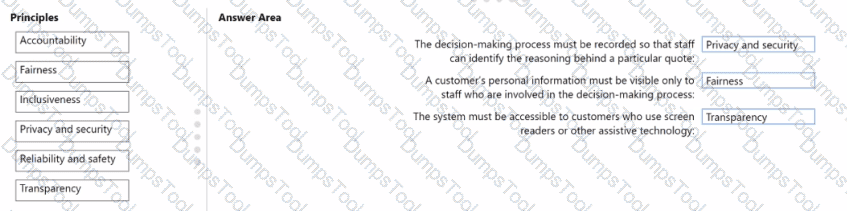

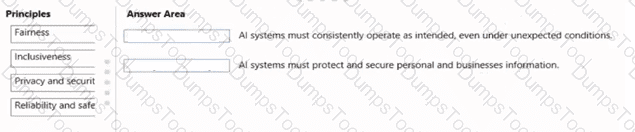

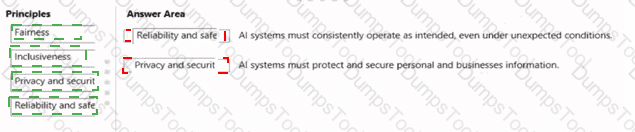

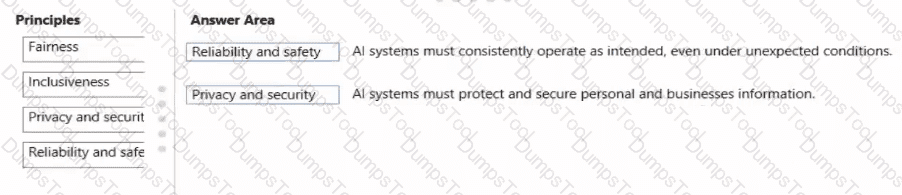

Match the Microsoft Responsible AI principles to the appropriate requirements.

To answer, drag the appropriate principle from the column on the left to its requirement on the right. Each principle may be used once, more than once, or not at all.

NOTE: Each correct match is worth one point.

Microsoft’s Responsible AI principles are the foundation for developing and deploying ethical and trustworthy AI systems. The six key principles are Fairness, Reliability and Safety, Privacy and Security, Inclusiveness, Transparency, and Accountability. Each principle guides specific practices for ensuring AI systems operate responsibly in real-world applications like automated insurance quoting systems.

Transparency – This principle ensures that the AI’s decisions can be understood and explained. Recording the decision-making process and enabling staff to trace how a quote was generated aligns with transparency. It allows stakeholders to interpret the reasoning behind model outputs, ensuring that the AI behaves predictably and ethically.

Privacy and Security – This principle focuses on protecting personal data and ensuring that sensitive information is handled responsibly. Limiting access to customer data only to authorized personnel maintains compliance with privacy laws (like GDPR) and safeguards against misuse. Microsoft emphasizes that AI systems should maintain strict control over data visibility and integrity.

Inclusiveness – This principle ensures that AI systems are accessible to all users, including people with disabilities. By supporting screen readers and assistive technologies, the system ensures equal access to information and services for every customer. Inclusiveness prevents discrimination and promotes accessibility, both of which are central to Microsoft’s Responsible AI strategy.

Thus, the correct mapping of principles is:

Decision process → Transparency

Personal information visibility → Privacy and Security

Accessibility via screen readers → Inclusiveness.

You have an Azure Machine Learning model that uses clinical data to predict whether a patient has a disease.

You clean and transform the clinical data.

You need to ensure that the accuracy of the model can be proven.

What should you do next?

Train the model by using the clinical data.

Split the clinical data into two datasets.

Train the model by using automated machine learning (automated ML).

Validate the model by using the clinical data.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn modules on machine learning concepts, ensuring that the accuracy of a predictive model can be proven requires data partitioning—specifically splitting the available data into training and testing datasets. This is a foundational concept in supervised machine learning.

When you split the data, typically about 70–80% of the dataset is used for training the model, while the remaining 20–30% is held back for testing (or validation). This ensures that the model’s performance metrics, such as accuracy, precision, recall, and F1-score, are evaluated on data the model has never seen before. Evaluating on held-out data exposes overfitting, which a score computed on the training data would hide, and allows you to demonstrate that the model generalizes well to new, unseen data.

In the AI-900 Microsoft Learn content under “Describe the machine learning process”, it is explained that after cleaning and transforming the data, the next essential step is data splitting to “evaluate model performance objectively.” By keeping training and testing data separate, you can prove the reliability and accuracy of the model’s predictions, which is particularly crucial in sensitive domains like clinical or healthcare analytics, where decision transparency and validation are vital.

Option A (Train the model by using the clinical data) is incorrect because you should not train and evaluate on the same data—it would lead to biased results.

Option C (Train the model using automated ML) is incorrect because automated ML is a method for training and tuning, but it doesn’t inherently prove accuracy.

Option D (Validate the model by using the clinical data) is also incorrect if you use the same dataset for validation and training—it would not prove true accuracy.

Therefore, per Microsoft’s official AI-900 study content, the verified correct answer is B. Split the clinical data into two datasets.
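The metrics named in the explanation can be computed directly from predictions on the held-out test set. This is a minimal sketch for a binary disease/no-disease model; the function name `evaluate` and the sample labels are made up for illustration.

```python
def evaluate(y_true, y_pred):
    """Compute accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if (precision + recall) else 0.0)
    return {"accuracy": accuracy, "precision": precision,
            "recall": recall, "f1": f1}


# Known outcomes for test-set patients and the model's predictions for them.
test_labels = [1, 0, 1, 1, 0, 0, 1, 0]
test_predictions = [1, 0, 0, 1, 0, 1, 1, 0]

metrics = evaluate(test_labels, test_predictions)
```

Because `test_labels` come from rows the model never saw during training, these numbers are evidence of how the model behaves on new patients rather than a measure of how well it memorized the training data.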

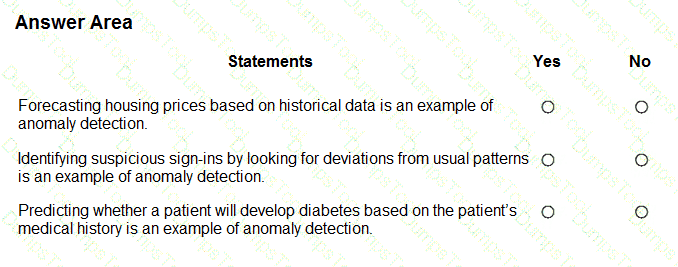

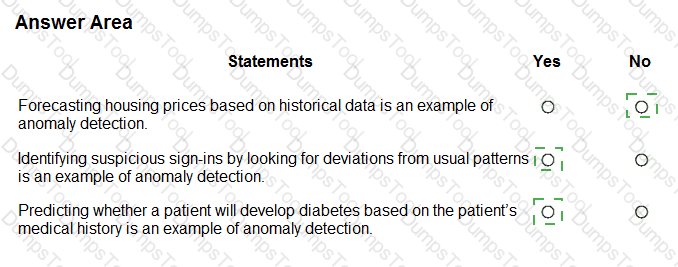

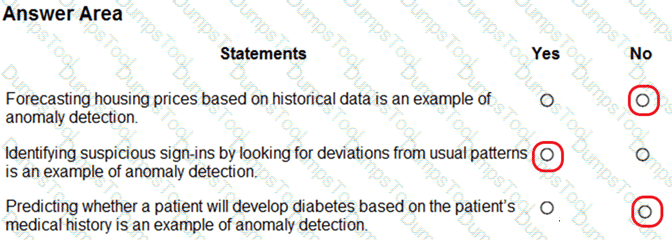

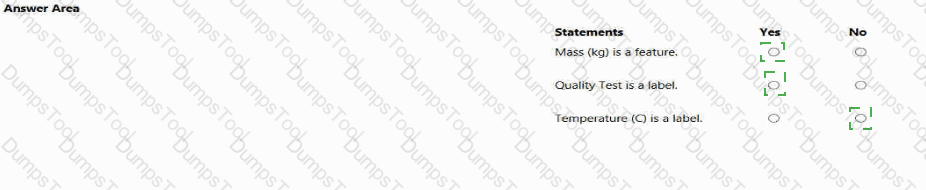

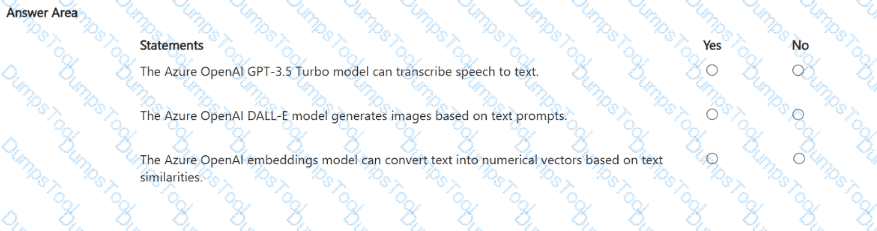

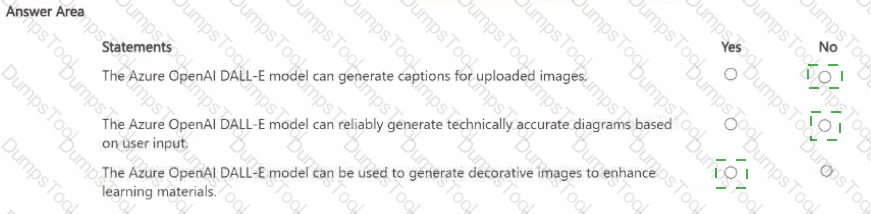

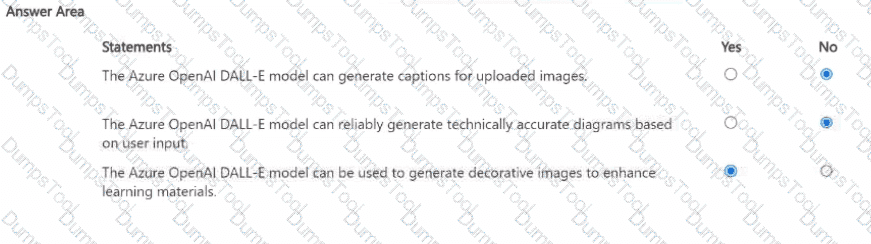

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Box 1: No

Box 2: Yes

Box 3: Yes

Anomaly detection encompasses many important tasks in machine learning:

Identifying transactions that are potentially fraudulent.

Learning patterns that indicate that a network intrusion has occurred.

Finding abnormal clusters of patients.

Checking values entered into a system.
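As a rough illustration of the first task in the list, the sketch below flags transaction amounts that lie far from the mean. It is a deliberately simple z-score check, not how any Azure service implements anomaly detection; the function name, the threshold of 2.5, and the sample amounts are assumptions for this example.

```python
from statistics import mean, pstdev


def find_anomalies(values, threshold=2.5):
    """Return values whose distance from the mean exceeds threshold standard deviations."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return []  # all values identical, nothing stands out
    return [v for v in values if abs(v - mu) / sigma > threshold]


# Mostly routine transaction amounts with one value that does not fit the pattern.
amounts = [20, 22, 19, 21, 20, 23, 18, 21, 20, 250]
suspicious = find_anomalies(amounts)
```

A single extreme value also inflates the mean and standard deviation it is compared against, so a production system would normally use a more robust method than this.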

Which two resources can you use to analyze code and generate explanations of code function and code comments? Each correct answer presents a complete solution.

NOTE: Each correct answer is worth one point.

the Azure OpenAI DALL-E model

the Azure OpenAI Whisper model

the Azure OpenAI GPT-4 model

the GitHub Copilot service

The correct answers are C. the Azure OpenAI GPT-4 model and D. the GitHub Copilot service.

According to the Microsoft Azure AI Fundamentals (AI-900) curriculum and Microsoft Learn documentation on Azure OpenAI and GitHub Copilot, both GPT-4 and GitHub Copilot can be used to analyze and generate explanations for code functionality, as well as produce or refine code comments.

Azure OpenAI GPT-4 model (C): The GPT-4 model is a large language model (LLM) developed by OpenAI and available through the Azure OpenAI Service. It is trained on vast amounts of text, including programming languages, documentation, and natural language instructions. This enables it to interpret source code, explain what it does, suggest optimizations, and automatically generate detailed code comments. When prompted with code snippets, GPT-4 can provide structured natural language explanations describing the logic and intent of the code. In enterprise scenarios, developers use Azure OpenAI GPT models for code understanding, review automation, and documentation generation.

GitHub Copilot service (D): GitHub Copilot, originally powered by OpenAI Codex, is an AI coding assistant integrated into IDEs such as Visual Studio Code. It can analyze code context and generate inline comments and explanations in real time. GitHub Copilot understands the syntax and intent of numerous programming languages and provides intelligent suggestions or explanations directly in the developer’s environment.

The other options are not suitable:

A. DALL-E is a generative image model for creating visual content, not text or code analysis.

B. Whisper is an automatic speech recognition (ASR) model used for converting speech to text, unrelated to code interpretation.

Therefore, based on the official Azure AI and GitHub documentation, the correct and verified answers are C. Azure OpenAI GPT-4 model and D. GitHub Copilot service.

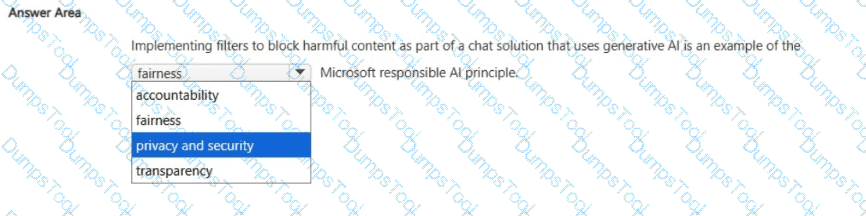

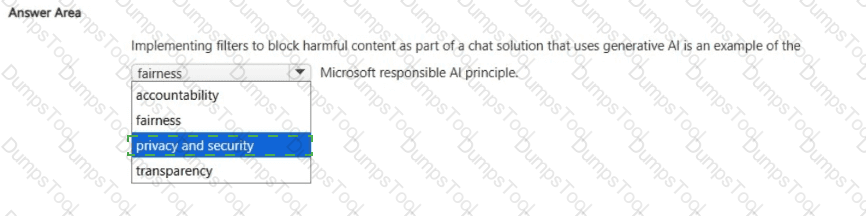

Select the answer that correctly completes the sentence.

Privacy and security.

According to Microsoft’s Responsible AI Principles, implementing filters to block harmful or inappropriate content in a Generative AI chat solution demonstrates a commitment to the Privacy and Security principle. This principle ensures that AI systems are designed and operated in a way that protects users, their data, and society from harm.

When a chat system uses Generative AI models (like Azure OpenAI’s GPT-based services), there is a risk that the model might produce unsafe, offensive, or sensitive content. Microsoft addresses this through content filters and safety systems, which automatically detect and block violent, hate-based, or sexually explicit outputs. This is part of responsible deployment practices to ensure that user interactions remain safe, private, and compliant with ethical standards.

Implementing these filters aligns with the Privacy and Security principle because it:

Protects users from exposure to harmful or abusive content.

Ensures that conversations are safeguarded against malicious or unsafe use.

Upholds user trust by maintaining a safe digital environment for all participants.

Let’s briefly clarify why the other options are incorrect:

Fairness deals with ensuring unbiased treatment and equitable outcomes in AI decisions.

Transparency focuses on explaining how AI systems make decisions.

Accountability refers to human oversight and responsibility for AI actions.

Thus, content filtering mechanisms are explicitly an example of Privacy and Security, as they protect users and data from harm or misuse while maintaining ethical AI behavior.

Therefore, the verified correct answer is Privacy and security.

Which Azure AI Document Intelligence prebuilt model should you use to extract parties and jurisdictions from a legal document?

contract

layout

general document

read

Within Azure AI Document Intelligence (formerly Form Recognizer), the Contract prebuilt model is designed to extract key information from legal and business contracts, including parties, jurisdictions, dates, and terms. According to Microsoft Learn, this prebuilt model identifies structured entities such as contracting parties, effective dates, governing jurisdictions, and termination clauses.

Layout (B) extracts text, tables, and structure but does not identify semantic information such as parties or jurisdictions.

General document (C) extracts key-value pairs and entities but lacks domain-specific contract analysis.

Read (D) performs OCR (optical character recognition) to extract raw text but not contextual metadata.

Thus, when the requirement is to extract parties and jurisdictions from a legal document, the Contract model is the correct Azure AI Document Intelligence choice.

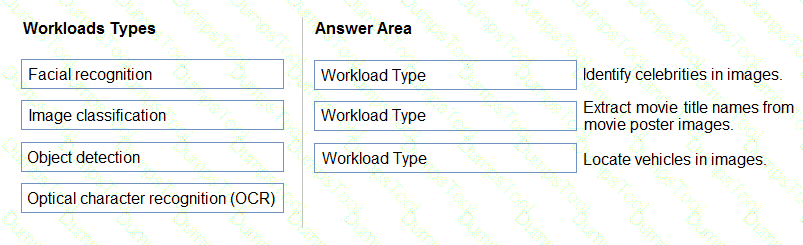

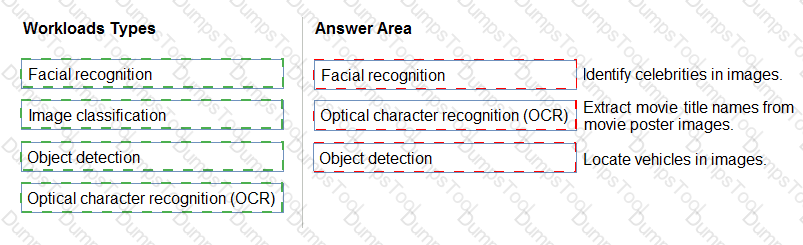

You need to build an app that will identify celebrities in images.

Which service should you use?

Azure OpenAI Service

Azure Machine Learning

conversational language understanding (CLU)

Azure AI Vision

According to the Microsoft Azure AI Fundamentals (AI-900) official learning path, the appropriate service for recognizing celebrities in images is Azure AI Vision (formerly Computer Vision). This service is part of Azure’s Cognitive Services suite and specializes in analyzing visual content using pretrained deep learning models. Its built-in capabilities, as documented in the Microsoft Learn module “Analyze images with Azure AI Vision”, include object detection, face detection, and celebrity recognition.

The Azure AI Vision Analyze API can detect and identify thousands of objects, brands, and celebrities. When an image is submitted to the service, the model compares detected faces to a known database of public figures and returns metadata including celebrity names, confidence scores, and bounding box coordinates. This makes it ideal for applications that need to recognize well-known individuals automatically—such as media cataloging, content tagging, or entertainment apps.

The other options are incorrect:

A. Azure OpenAI Service provides generative AI and language models (like GPT-4), but it cannot analyze image content directly in the context of AI-900 fundamentals.

B. Azure Machine Learning is for custom model training and deployment, not a prebuilt vision recognition service.

C. Conversational Language Understanding (CLU) processes natural language input, not images.

Therefore, the correct service for identifying celebrities in images is D. Azure AI Vision.

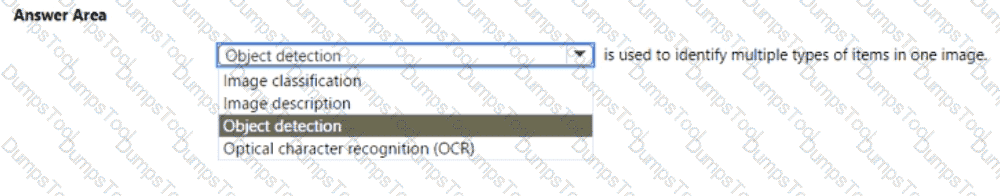

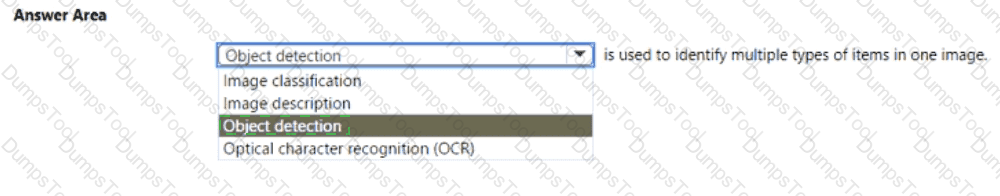

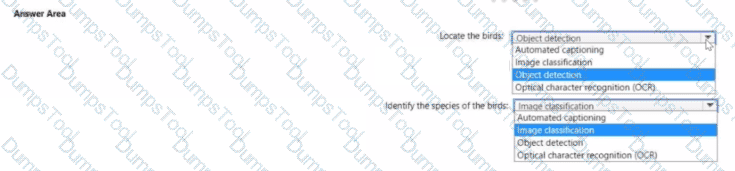

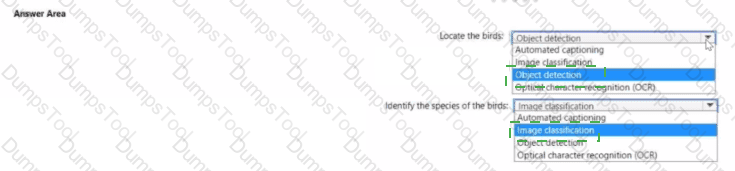

Select the answer that correctly completes the sentence.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Identify features of Computer Vision workloads on Azure”, Object Detection is a specific computer vision capability used to identify and locate multiple types of objects within a single image. Unlike image classification, which assigns one label to an entire image, object detection identifies individual objects, their categories, and their positions using bounding boxes or polygons.

In practical terms, Object Detection combines two key outputs:

Classification – recognizing what the object is (for example, “car”, “person”, “dog”).

Localization – determining where the object appears in the image by drawing bounding boxes around it.

This technology is commonly used in scenarios such as traffic monitoring (detecting vehicles and pedestrians), retail shelf analysis (detecting products and inventory levels), and manufacturing quality control (identifying defective parts).

Microsoft’s Azure Cognitive Services – Custom Vision includes a dedicated Object Detection domain, which allows developers to train custom models to recognize multiple object types within a single image. The service uses deep learning techniques, particularly convolutional neural networks (CNNs), to process pixel patterns and spatial relationships for accurate detection.

For contrast:

Image Classification identifies only the overall category of an image (e.g., “This is a cat”).

Image Description generates captions summarizing the visual content (e.g., “A cat sitting on a couch”).

Optical Character Recognition (OCR) detects and extracts text from images, not physical objects.

Therefore, per the official AI-900 learning content and Azure documentation, when the goal is to identify multiple types of items within a single image, the correct AI workload is Object Detection.

You need to convert handwritten notes into digital text.

Which type of computer vision should you use?

optical character recognition (OCR)

object detection

image classification

facial detection

According to the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn documentation on Azure AI Vision, OCR is a computer vision technology that detects and extracts printed or handwritten text from images, scanned documents, or photographs. The OCR feature in Azure AI Vision can analyze images containing handwritten notes, recognize the characters, and convert them into machine-readable digital text.

This process is ideal for digitizing handwritten meeting notes, forms, or classroom materials. OCR works by identifying text regions in an image, segmenting characters or words, and then applying language models to interpret them correctly. Azure’s OCR capabilities support multiple languages and can handle varied handwriting styles.

Other options are incorrect because:

B. Object detection identifies and locates objects (like cars, animals, or furniture) within an image, not text.

C. Image classification assigns an image to a predefined category (e.g., “dog” or “cat”) rather than extracting text.

D. Facial detection detects or recognizes human faces, not written text.

Therefore, to convert handwritten notes into digital text, the correct computer vision technique is Optical Character Recognition (OCR).

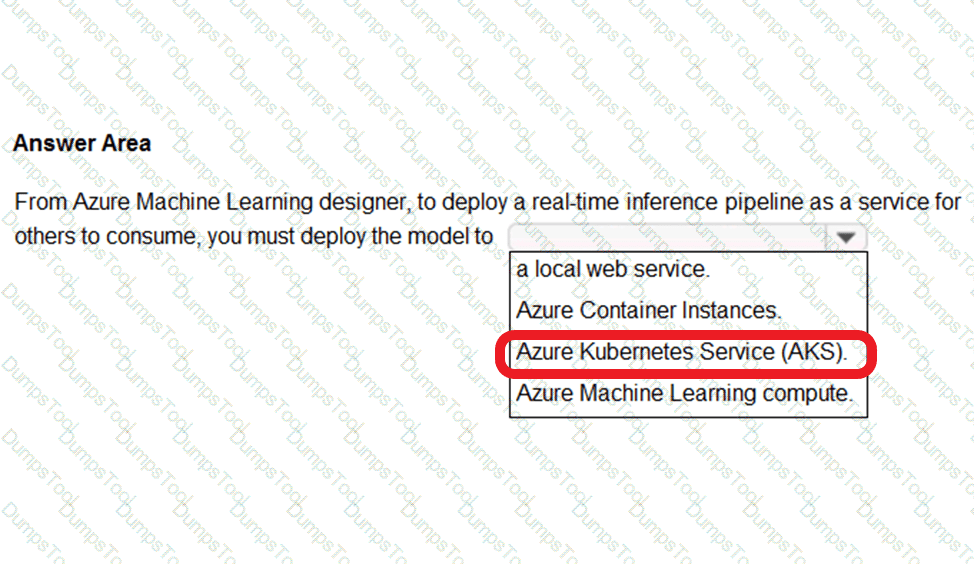

To complete the sentence, select the appropriate option in the answer area.

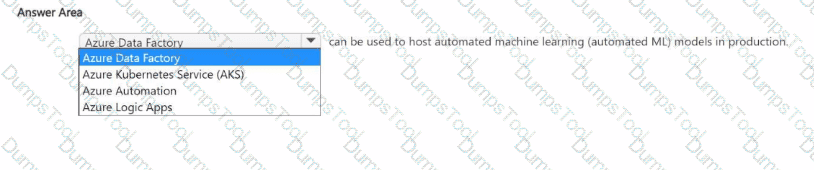

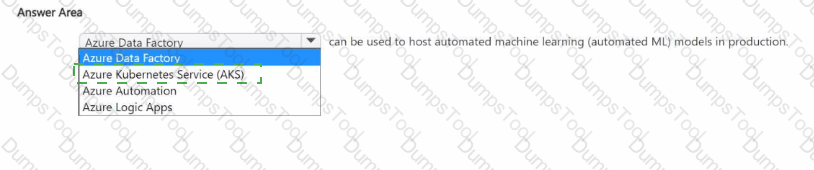

In the Microsoft Azure AI Fundamentals (AI-900) and Azure Machine Learning (AML) learning paths, deploying a real-time inference pipeline refers to making a trained machine learning model available as a web service that can process incoming data and return predictions instantly. To achieve this, the model must be deployed to an infrastructure capable of handling continuous, low-latency requests with high reliability and scalability.

Microsoft’s official guidance from Azure Machine Learning documentation specifies that:

For testing or development, you can deploy to Azure Container Instances (ACI) because it provides a lightweight, temporary environment suitable for small-scale or non-production workloads.

For production-grade, real-time inference, the deployment should be made to Azure Kubernetes Service (AKS).

AKS provides enterprise-level scalability, load balancing, and high availability, which are critical for serving real-time predictions to multiple consumers simultaneously. It manages containerized applications using Kubernetes orchestration, allowing the model to scale automatically based on traffic demands.

Azure Machine Learning Compute is mainly used for model training and batch inference pipelines, not real-time endpoints. A local web service is typically used only for debugging or offline testing on a developer machine and cannot be shared for external consumption.

Therefore, when deploying a real-time inference pipeline as a service for others to consume, the correct and Microsoft-verified option is Azure Kubernetes Service (AKS). This environment ensures production readiness, secure endpoint management, and scalability for live AI applications, fully aligning with best practices outlined in the Azure Machine Learning designer documentation and AI-900 exam objectives.

https://docs.microsoft.com/en-us/azure/machine-learning/concept-designer#deploy

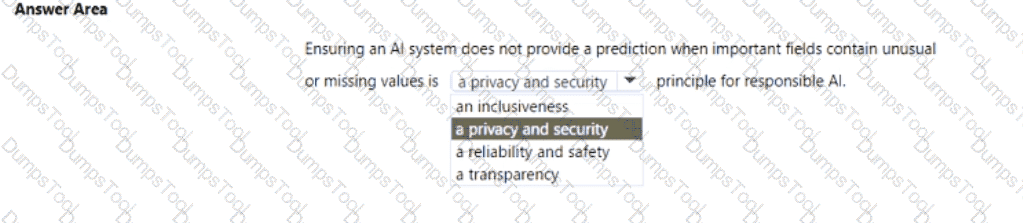

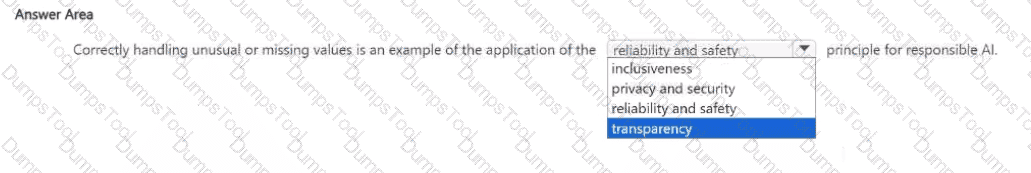

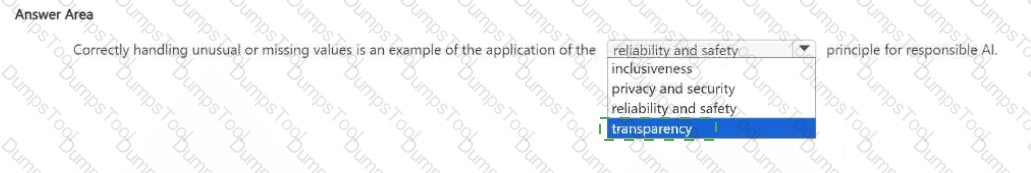

Select the answer that correctly completes the sentence.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft’s Responsible AI Framework, the Reliability and Safety principle ensures that AI systems operate consistently, accurately, and as intended, even when confronted with unexpected data or edge cases. It emphasizes that AI systems must be tested, validated, and monitored to ensure stable performance and to prevent harm caused by inaccurate or unreliable outputs.

In the given scenario, the AI system is designed not to provide predictions when key fields contain unusual or missing values. This approach demonstrates that the system is built to avoid unreliable or unsafe outputs that could result from incomplete or corrupted data. Microsoft explicitly outlines that reliable AI systems must handle data anomalies and input validation properly to prevent incorrect predictions.

Here’s how the other options differ:

Inclusiveness ensures accessibility for all users, including those with disabilities or from different backgrounds. It’s unrelated to prediction control or data reliability.

Privacy and Security protects sensitive data and ensures proper handling of personal information, not system prediction logic.

Transparency ensures that users understand how an AI system makes its decisions but doesn’t address prediction reliability.

Thus, stopping a prediction when data is incomplete or abnormal directly supports the Reliability and Safety principle — it ensures that the AI model functions correctly under valid conditions and avoids unintended or harmful outcomes.

This principle aligns with Microsoft’s Responsible AI guidance, which highlights that AI solutions must “operate reliably and safely, even under unexpected conditions, to protect users and maintain trust.”
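The behavior described in the scenario, declining to predict when key fields are missing or unusual, can be sketched as a guard in front of the model. Everything here is hypothetical: the field names, the accepted ranges, `safe_predict`, and `toy_model` are placeholders chosen for illustration, and the fields are assumed to be numeric.

```python
# Hypothetical key fields and the numeric range considered plausible for each.
REQUIRED_FIELDS = {
    "age": (0, 120),
    "annual_income": (0, 10_000_000),
}


def safe_predict(record, model):
    """Return (prediction, None), or (None, reason) when the input cannot be trusted."""
    for field, (low, high) in REQUIRED_FIELDS.items():
        value = record.get(field)
        if value is None:
            return None, f"missing value for '{field}'"
        if not (low <= value <= high):
            return None, f"unusual value for '{field}': {value}"
    return model(record), None


def toy_model(record):
    """Stand-in for a trained model; a real model would be loaded elsewhere."""
    return record["annual_income"] > 50_000


ok = safe_predict({"age": 40, "annual_income": 60_000}, toy_model)
missing = safe_predict({"age": 40}, toy_model)
unusual = safe_predict({"age": 400, "annual_income": 60_000}, toy_model)
```

Returning a reason alongside the refusal lets the calling application tell the user why no prediction was made instead of silently producing an unreliable one.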

You need to provide content for a business chatbot that will help answer simple user queries.

What are three ways to create question and answer text by using Azure AI Language service's question answering? Each correct answer presents a complete solution.

NOTE: Each correct selection is worth one point.

Connect the bot to the Cortana channel using Cortana.

Import chit-chat content from a predefined data source.

Manually enter the questions and answers.

Use Azure Machine Learning Automated ML to train a model based on a file that contains question and answer pairs.

Generate the questions and answers from an existing webpage.

The correct answers are B. Import chit-chat content from a predefined data source, C. Manually enter the questions and answers, and E. Generate the questions and answers from an existing webpage.

According to Microsoft Learn and the Azure AI Fundamentals (AI-900) study guide, the Question Answering feature of the Azure AI Language Service (formerly part of QnA Maker) allows developers to create a knowledge base (KB) that enables a chatbot to answer common questions automatically. This knowledge base can be built in three main ways:

Import chit-chat content (B): Azure provides predefined chit-chat datasets that can be imported to make a bot more conversational and natural. This includes small talk such as greetings, acknowledgments, and polite responses (for example, “How are you?” → “I’m doing great, thanks!”). Importing this content enriches the bot’s personality and improves user engagement.

Manually enter questions and answers (C): Developers can manually add pairs of questions and answers directly into the question answering knowledge base. This approach is suitable for custom FAQs or domain-specific content. It gives complete control over how each question is phrased and what answer is returned, ensuring high precision and clarity.

Generate questions and answers from an existing webpage (E): Azure AI Language can automatically extract Q&A pairs from a website’s FAQ or support page. This is done by providing the webpage URL to the service, which scans the page and builds a knowledge base from the detected questions and corresponding answers.

The other options are incorrect:

A (Cortana channel) relates to bot deployment, not knowledge creation.

D (Automated ML) is used for predictive modeling, not for building Q&A datasets.

Thus, the verified correct answers are B, C, and E.

For a machine learning process, how should you split data for training and evaluation?

Use features for training and labels for evaluation.

Randomly split the data into rows for training and rows for evaluation.

Use labels for training and features for evaluation.

Randomly split the data into columns for training and columns for evaluation.

https://docs.microsoft.com/en-us/azure/machine-learning/algorithm-module-reference/split-data

The correct answer is B. Randomly split the data into rows for training and rows for evaluation.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Describe fundamental principles of machine learning on Azure”, the process of developing a machine learning model involves dividing the available dataset into two or more parts—commonly training data and evaluation (or testing) data. The goal is to ensure that the model can learn patterns from one subset of the data (training set) and then be objectively tested on unseen data (evaluation set) to measure how well it generalizes to new situations.

The training dataset contains both features (the measurable inputs) and labels (the target outputs). The model learns from the patterns and relationships between these features and labels. The evaluation dataset also contains features and labels, but it is kept separate during the training phase. Once the model has been trained, it is tested on this unseen evaluation data to calculate metrics like accuracy, precision, recall, or F1 score.

Microsoft emphasizes that the data split should be random and based on rows, not columns. Each row represents a complete observation (for example, one customer record, one transaction, or one image). Randomly splitting ensures that both subsets represent the same distribution of data, avoiding bias. Splitting by columns would separate features themselves, which would make the model training invalid.

The AI-900 materials often illustrate this using Azure Machine Learning’s data preparation workflow, where data is randomly divided (commonly 70% for training and 30% for testing). This ensures the model learns from diverse examples and is fairly evaluated.

Therefore, the verified and correct approach, as per Microsoft’s official guidance, is B. Randomly split the data into rows for training and rows for evaluation.
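A random row split along the lines described above can be sketched in a few lines of standard-library Python. The function name `split_rows`, the fixed seed, and the toy rows are assumptions for illustration; Azure Machine Learning provides its own Split Data component for this step.

```python
import random


def split_rows(rows, train_fraction=0.7, seed=0):
    """Shuffle a copy of the rows, then cut it into training and evaluation sets."""
    shuffled = rows[:]                      # leave the caller's list untouched
    random.Random(seed).shuffle(shuffled)   # seeded so the split is repeatable
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


# Each row is one complete observation: its features and its label stay together.
rows = [{"feature": i, "label": i % 2} for i in range(10)]
train, test = split_rows(rows)
```

Every row keeps all of its columns, so both subsets contain features and labels; only the set of observations differs between them.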

When training a model, why should you randomly split the rows into separate subsets?

to train the model twice to attain better accuracy

to train multiple models simultaneously to attain better performance

to test the model by using data that was not used to train the model

When training a machine learning model, it is standard practice to randomly split the dataset into training and testing subsets. The purpose of this is to evaluate how well the model generalizes to unseen data. According to the AI-900 study guide and Microsoft Learn module “Split data for training and evaluation”, this ensures that the model is trained on one portion of the data (training set) and evaluated on another (test or validation set).

The correct answer is C. to test the model by using data that was not used to train the model.

Keeping the test rows out of training avoids data leakage, and evaluating on them reveals overfitting, which occurs when a model memorizes patterns from the training data instead of learning generalizable relationships. By testing on unseen data, developers can assess true performance and estimate how accurate predictions will be on future, real-world data.

Options A and B are incorrect because:

A. Training the model twice does not improve accuracy; model accuracy depends on data quality, feature engineering, and algorithm choice.

B. Training multiple models simultaneously refers to model comparison, not the purpose of splitting data.

Thus, the correct reasoning is that random splitting provides a reliable estimate of the model’s predictive power on new data.
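The gap between training and held-out performance can be shown with a deliberately bad model. The sketch below is a toy under stated assumptions: the lookup-table "model", the even/odd task, and the data are all invented for illustration, and the held-out rows are taken from a separate range purely to keep the example deterministic.

```python
def train_lookup_model(training_pairs):
    """'Train' by memorizing every (input, label) pair seen during training."""
    table = dict(training_pairs)
    # Unseen inputs fall back to a fixed guess, because nothing was generalized.
    return lambda x: table.get(x, 0)

def accuracy(model, pairs):
    correct = sum(1 for x, y in pairs if model(x) == y)
    return correct / len(pairs)

# Hypothetical task: the label is 1 when the number is even, otherwise 0.
train_pairs = [(n, int(n % 2 == 0)) for n in range(0, 20)]
test_pairs = [(n, int(n % 2 == 0)) for n in range(20, 30)]

model = train_lookup_model(train_pairs)
train_accuracy = accuracy(model, train_pairs)
test_accuracy = accuracy(model, test_pairs)
print("training accuracy:", train_accuracy)
print("held-out accuracy:", test_accuracy)
```

Evaluating only on the training rows would report a perfect score for a model that has learned nothing reusable; the held-out rows reveal that.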

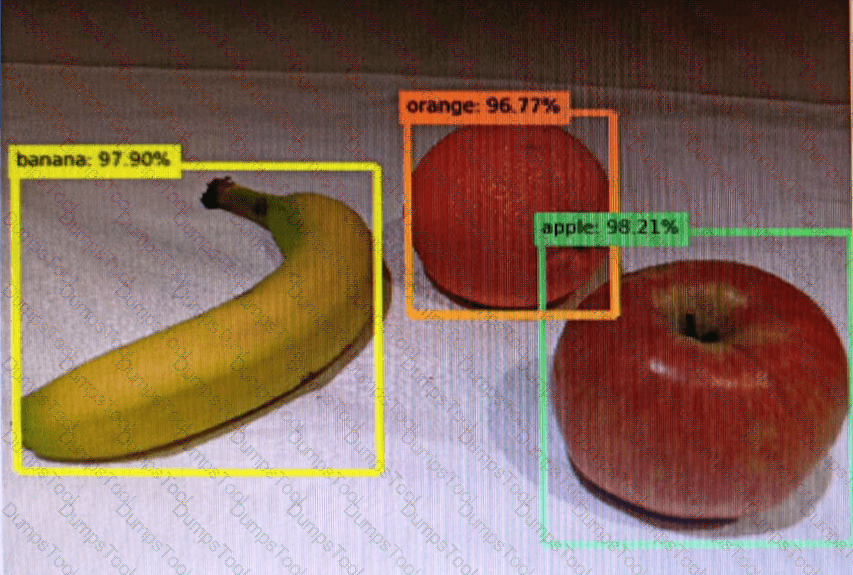

You send an image to a Computer Vision API and receive back the annotated image shown in the exhibit.

Which type of computer vision was used?

object detection

semantic segmentation

optical character recognition (OCR)

image classification

Object detection is similar to tagging, but the API returns the bounding box coordinates (in pixels) for each object found. For example, if an image contains a dog, cat and person, the Detect operation will list those objects together with their coordinates in the image. You can use this functionality to process the relationships between the objects in an image. It also lets you determine whether there are multiple instances of the same tag in an image.

The Detect API applies tags based on the objects or living things identified in the image. There is currently no formal relationship between the tagging taxonomy and the object detection taxonomy. At a conceptual level, the Detect API only finds objects and living things, while the Tag API can also include contextual terms like "indoor", which can't be localized with bounding boxes.
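To make the idea of bounding boxes concrete, the sketch below works on a hand-written detection result. The field names (`tag`, `box`, `x`, `y`, `w`, `h`) and the pixel values are illustrative assumptions, not the exact response schema of the service.

```python
from collections import Counter

# Hand-written sample result; field names and coordinates are assumptions.
detections = [
    {"tag": "dog", "box": {"x": 10, "y": 40, "w": 120, "h": 90}},
    {"tag": "dog", "box": {"x": 200, "y": 50, "w": 110, "h": 85}},
    {"tag": "person", "box": {"x": 150, "y": 5, "w": 60, "h": 180}},
]

def count_instances(items):
    """Count how many times each tag was detected in the image."""
    return Counter(item["tag"] for item in items)

def boxes_overlap(a, b):
    """True when two pixel rectangles share any area."""
    return (a["x"] < b["x"] + b["w"] and b["x"] < a["x"] + a["w"]
            and a["y"] < b["y"] + b["h"] and b["y"] < a["y"] + a["h"])

counts = count_instances(detections)
print(dict(counts))
print(boxes_overlap(detections[1]["box"], detections[2]["box"]))
```

Counting tags shows multiple instances of the same object, and comparing rectangles is one simple way to reason about relationships between objects, neither of which is possible with whole-image tags alone.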

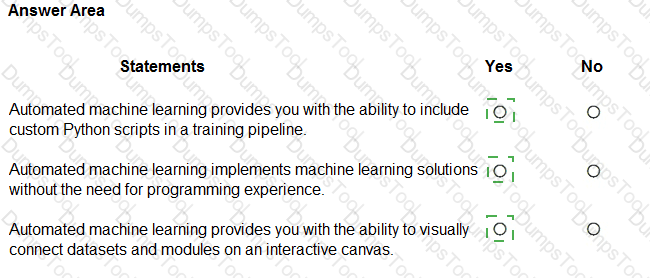

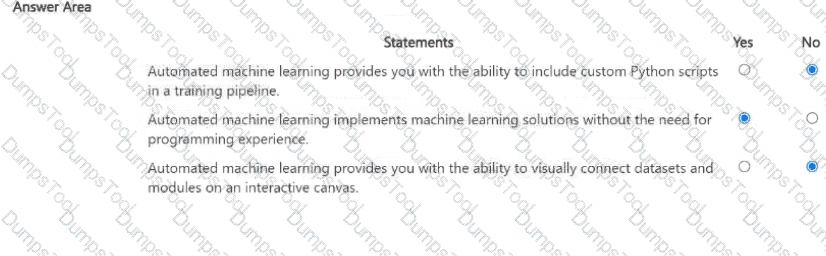

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

According to the Microsoft Azure AI Fundamentals (AI-900) study guide and Azure Machine Learning documentation, Automated Machine Learning (AutoML) is a feature designed to help users build, train, and tune machine learning models automatically without requiring deep knowledge of programming or data science.

First Statement: “Automated machine learning provides you with the ability to include custom Python scripts in a training pipeline.” This is False (No). AutoML automates the model selection and tuning process but does not allow the inclusion of custom Python scripts within its workflow. Custom Python integration is supported in Azure Machine Learning designer pipelines or SDK-based training, not in AutoML.

Second Statement: “Automated machine learning implements machine learning solutions without the need for programming experience.” This is True (Yes). One of AutoML’s core benefits is that it enables non-programmers to train and evaluate models by simply selecting data, choosing a target column, and letting Azure automatically test algorithms and hyperparameters. This aligns with Microsoft’s AI-900 objective to democratize AI development.

Third Statement: “Automated machine learning provides you with the ability to visually connect datasets and modules on an interactive canvas.” This is False (No). That feature belongs to Azure Machine Learning designer, not AutoML. The designer offers a drag-and-drop visual interface for connecting datasets and modules, whereas AutoML provides a wizard-driven approach focused on automation.

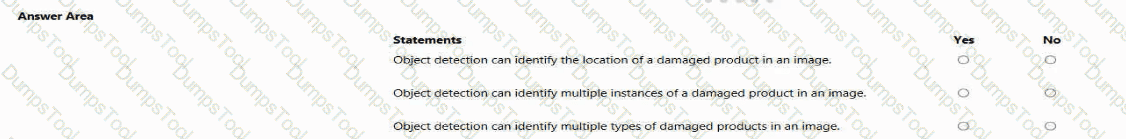

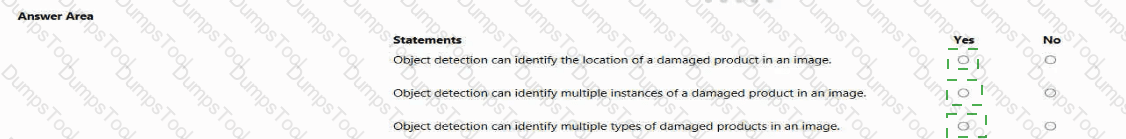

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Location of a damaged product → Yes

Multiple instances of the same product → Yes

Multiple types of damaged products → Yes

All three statements are Yes, because they correctly describe the capabilities of object detection, one of the major workloads in computer vision, as defined in the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn module: “Describe features of computer vision workloads on Azure.”

Object detection is an advanced computer vision technique that allows AI systems not only to classify objects within an image but also to locate them by drawing bounding boxes around each detected object. This differentiates it from simple image classification, which only identifies what objects exist in an image without specifying their locations.

Identifying the location of a damaged product – Yes. According to Microsoft Learn, object detection can return the coordinates or bounding boxes for recognized objects. Therefore, if the model is trained to detect damaged products, it can pinpoint exactly where those defects appear within an image.

Identifying multiple instances of a damaged product – Yes. Object detection models can detect multiple objects of the same class in one image. For instance, if an image contains several damaged products, each will be identified and marked individually. This feature supports tasks such as automated quality inspection in manufacturing, where several defective units may appear simultaneously.

Identifying multiple types of damaged products – Yes. Object detection can also distinguish different classes of objects. When trained on multiple labels (e.g., cracked, scratched, or broken items), the model can detect and classify each type of damage in one image.

In Microsoft’s AI-900 framework, object detection is presented as a critical part of computer vision workloads capable of locating and classifying multiple objects and categories within visual content.

What is a form of unsupervised machine learning?

multiclass classification

clustering

binary classification

regression

As outlined in the AI-900 study guide and Microsoft Learn’s “Explore fundamental principles of machine learning” module, clustering is a core example of unsupervised machine learning.

In unsupervised learning, the model is trained on data without labeled outcomes. The goal is to discover patterns or groupings naturally present in the data. Clustering algorithms, such as K-means, DBSCAN, or hierarchical clustering, analyze similarities among data points and group them into clusters. For example, clustering can group customers by purchasing behavior or segment products by shared characteristics, all without predefined labels.

Supervised learning, by contrast, uses labeled data (input-output pairs) to train a model that predicts outcomes. This includes:

A. Multiclass classification – Predicts more than two categories (e.g., classifying images as dog, cat, or bird).

C. Binary classification – Predicts two categories (e.g., spam vs. not spam).

D. Regression – Predicts continuous numeric values (e.g., price prediction).

Therefore, the only option representing unsupervised learning is clustering, which enables data discovery without predefined labels.
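As a rough illustration of clustering without labels, here is a minimal one-dimensional K-means sketch in plain Python. The spending figures and the starting centroids are made up for the example, and a production workload would normally use a library implementation rather than this loop.

```python
def kmeans_1d(values, centroids, iterations=10):
    """Plain K-means on numbers: assign to the nearest centroid, then re-average."""
    centroids = list(centroids)
    clusters = [[] for _ in centroids]
    for _ in range(iterations):
        clusters = [[] for _ in centroids]
        for v in values:
            nearest = min(range(len(centroids)), key=lambda i: abs(v - centroids[i]))
            clusters[nearest].append(v)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids, clusters

# Hypothetical monthly spend per customer; note that no labels are supplied.
monthly_spend = [12, 15, 14, 90, 95, 88]

centers, groups = kmeans_1d(monthly_spend, centroids=[0, 100])
print(groups)
```

The algorithm is never told which customers are "low" or "high" spenders; the two groups emerge from the distances between the values alone.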

Which Azure Cognitive Services service can be used to identify documents that contain sensitive information?

Custom Vision

Conversational Language Understanding

Form Recognizer

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials and Microsoft Learn module “Identify features of common AI workloads,” the Azure Form Recognizer service is part of Azure Cognitive Services for Document Intelligence. It enables organizations to extract, analyze, and identify information from structured and unstructured documents, including sensitive or confidential data such as names, addresses, financial figures, and identification numbers.

Form Recognizer uses optical character recognition (OCR) combined with machine learning to automatically extract key-value pairs, tables, and text fields from documents like invoices, receipts, contracts, and forms. It can be customized to identify and classify documents that contain specific sensitive data, allowing businesses to automate compliance and data governance tasks.

By contrast:

A. Custom Vision is used for image classification and object detection — it analyzes visual data, not document content.

B. Conversational Language Understanding (formerly LUIS) identifies intent and entities in text conversations, not document structure or sensitive data.

Form Recognizer is explicitly mentioned in the AI-900 course as the tool for document analysis and extraction. It can even integrate with Azure Cognitive Search or Azure Purview for further data management and compliance workflows.

Therefore, the verified and correct answer, aligned with Microsoft’s official training content, is C. Form Recognizer, as it is the Azure Cognitive Service capable of identifying and processing documents containing sensitive information.

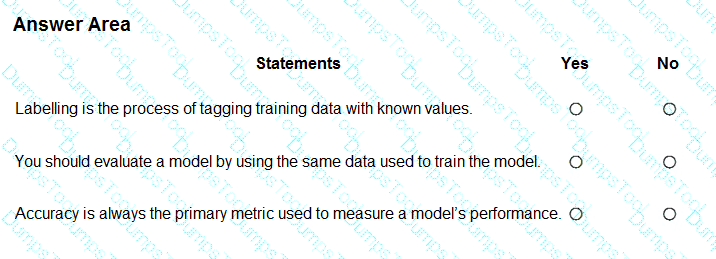

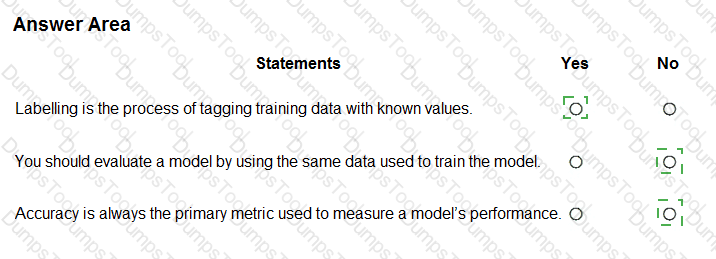

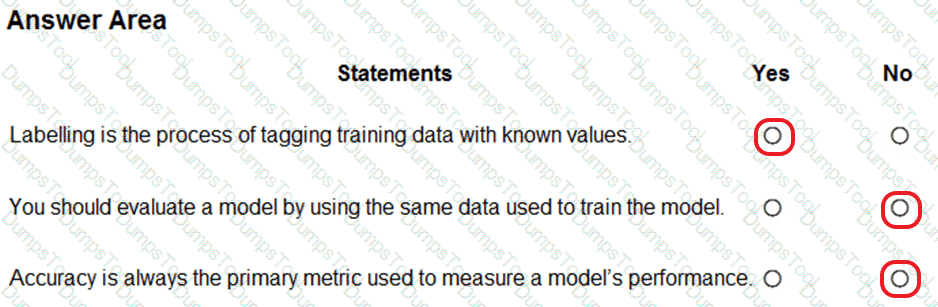

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

This question evaluates understanding of fundamental machine learning concepts as covered in the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Explore the machine learning process.” These statements relate to data labeling, model evaluation practices, and performance metrics—three essential parts of building and assessing a machine learning model.

Labelling is the process of tagging training data with known values → Yes. According to Microsoft Learn, “Labeling is the process of tagging data with the correct output value so the model can learn relationships between inputs and outputs.” This is essential for supervised learning, where models require historical data with known outcomes. For example, if training a model to recognize fruit images, each image is labeled as “apple,” “banana,” or “orange.” Hence, this statement is true.

You should evaluate a model by using the same data used to train the model → No. The AI-900 guide stresses that using the same data for both training and evaluation can hide overfitting, where the model performs well on training data but poorly on unseen data. Instead, the dataset is split into training and testing (or validation) subsets. Evaluation must use test data that the model has never seen before to ensure an unbiased measure of performance. Therefore, this statement is false.

Accuracy is always the primary metric used to measure a model’s performance → No. Microsoft Learn emphasizes that accuracy is only one metric and not always the best choice. Depending on the problem type, other metrics such as precision, recall, F1-score, or AUC (Area Under the Curve) may be more appropriate, especially in cases with imbalanced datasets. For example, in fraud detection, recall may be more important than accuracy. Thus, this statement is false.
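The fraud-detection point can be checked with a small calculation. The sketch below assumes an invented dataset of 100 transactions with 5 fraud cases and two hypothetical sets of predictions; the numbers are illustrative only.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives and negatives for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    return tp, tn, fp, fn

def accuracy(y_true, y_pred):
    tp, tn, fp, fn = confusion_counts(y_true, y_pred)
    return (tp + tn) / (tp + tn + fp + fn)

def recall(y_true, y_pred):
    tp, _, _, fn = confusion_counts(y_true, y_pred)
    return tp / (tp + fn) if (tp + fn) else 0.0

# Hypothetical imbalanced data: 5 fraud cases (1) among 100 transactions.
y_true = [1] * 5 + [0] * 95
always_legitimate = [0] * 100                      # never flags fraud
cautious = [1, 1, 1, 1, 0] + [1] * 10 + [0] * 85   # catches 4 of 5, with 10 false alarms

for name, y_pred in [("always_legitimate", always_legitimate), ("cautious", cautious)]:
    print(name, "accuracy:", accuracy(y_true, y_pred), "recall:", recall(y_true, y_pred))
```

The model that never flags fraud has the higher accuracy yet finds none of the fraud cases, which is why accuracy alone can be misleading on imbalanced data.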

Which action can be performed by using the Azure AI Vision service?

identifying breeds of animals in live video streams

extracting key phrases from documents

extracting data from handwritten letters

creating thumbnails for training videos

The Azure AI Vision service (formerly Computer Vision) is designed to analyze visual content in images and videos. According to Microsoft Learn’s “Describe features of computer vision workloads,” Azure AI Vision can identify objects, people, text, and scenes, and even classify images or detect objects in real time.

Identifying breeds of animals in live video streams is an example of image classification or object detection—core capabilities of Azure AI Vision. The Vision service can analyze each frame in a video, recognize animals, and classify them according to known categories, making this the correct answer.

The other options are incorrect:

B. Extracting key phrases from documents → Done by Azure AI Language (Text Analytics).

C. Extracting data from handwritten letters → Done by Azure AI Document Intelligence (Form Recognizer) using OCR.

D. Creating thumbnails for training videos → While possible in Azure Media Services, it’s not a primary Azure AI Vision function.

Thus, the best answer is A. Identifying breeds of animals in live video streams.

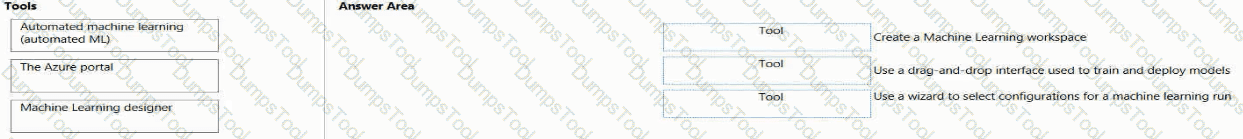

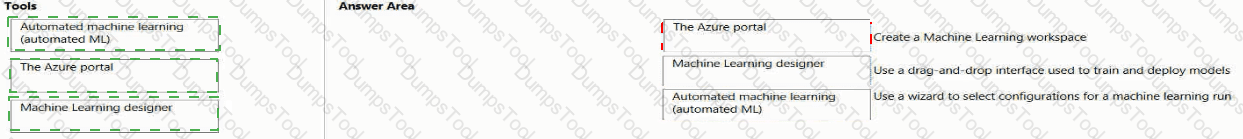

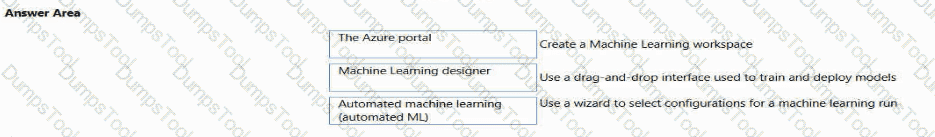

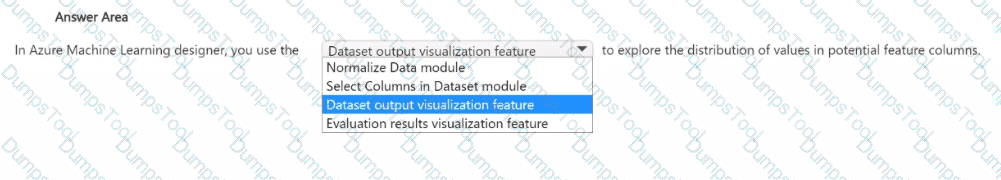

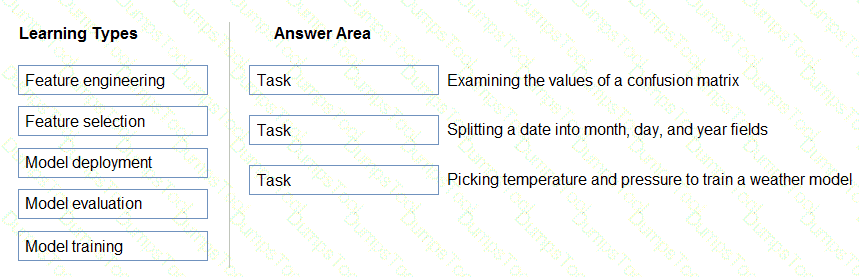

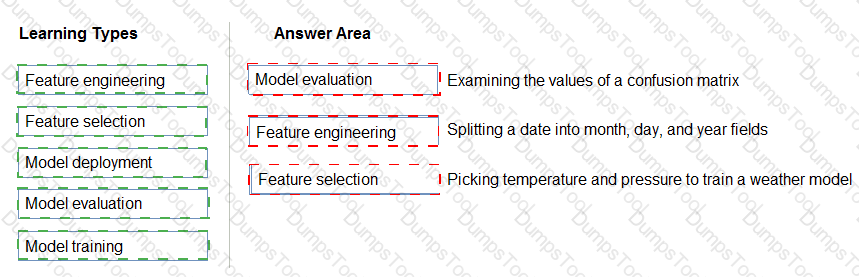

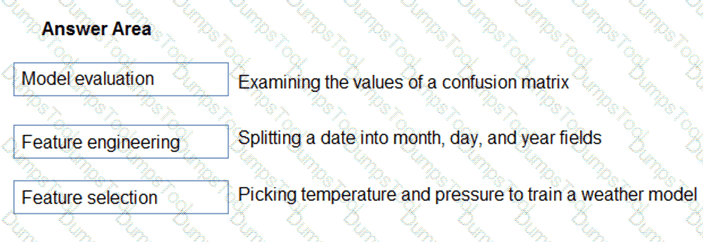

Match the tool to the Azure Machine Learning task.

To answer, drag the appropriate tool from the column on the left to its tasks on the right. Each tool may be used once, more than once, or not at all.

NOTE: Each correct match is worth one point.

The correct matching aligns directly with the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn modules under “Identify features of Azure Machine Learning”. Azure Machine Learning provides a suite of tools that serve different functions within the model development lifecycle — from creating workspaces, to training models, to automating experimentation.

The Azure portal → Create a Machine Learning workspace. The Azure portal is a web-based graphical interface for managing all Azure resources. According to Microsoft Learn, you use the portal to create and configure the Azure Machine Learning workspace, which acts as the central environment where datasets, experiments, models, and compute resources are organized. Creating a workspace through the portal involves specifying a subscription, resource group, and region; these tasks are part of the setup stage rather than model development.

Machine Learning designer → Use a drag-and-drop interface to train and deploy models. The Machine Learning designer is a feature of Azure Machine Learning studio that offers a visual, no-code/low-code interface for building, training, and deploying machine learning pipelines, similar in spirit to the older ML Studio (classic). The designer uses a drag-and-drop workflow where users connect modules representing data transformations, model training, and evaluation. This tool is ideal for beginners and those who want to quickly experiment with machine learning concepts without writing code.

Automated machine learning (Automated ML) → Use a wizard to select configurations for a machine learning run. Automated ML simplifies model creation by automatically selecting algorithms, hyperparameters, and data preprocessing options. Users interact through a guided wizard (within the Azure Machine Learning studio) that walks them through configuration steps such as selecting datasets, target columns, and performance metrics. The system then iteratively trains and evaluates multiple models to recommend the best-performing one.

Together, these tools streamline the machine learning workflow:

Azure portal for setup and resource management,

Machine Learning designer for visual model creation, and

Automated ML for guided, automated model selection and tuning.
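The core idea behind Automated ML, trying several candidates and keeping the one that scores best on a chosen metric, can be sketched conceptually. The code below is not the Azure Machine Learning SDK; the candidate functions, the validation rows, and the `pick_best` helper are hypothetical, and the candidates are fixed formulas rather than trained and tuned models.

```python
def mean_absolute_error(model, rows):
    """Average absolute difference between predictions and true values."""
    return sum(abs(model(x) - y) for x, y in rows) / len(rows)

# Hypothetical candidates standing in for the algorithms a run might try.
candidates = {
    "constant": lambda x: 10.0,
    "double": lambda x: 2.0 * x,
    "double_plus_one": lambda x: 2.0 * x + 1.0,
}

# Hypothetical validation rows: (feature, true value).
validation_rows = [(1, 3.1), (2, 4.9), (3, 7.2), (4, 8.8)]

def pick_best(models, rows):
    """Score every candidate on the same rows and return the lowest-error one."""
    scores = {name: mean_absolute_error(m, rows) for name, m in models.items()}
    best = min(scores, key=scores.get)
    return best, scores

best_name, scores = pick_best(candidates, validation_rows)
print("recommended:", best_name)
```

An actual automated run would also train each candidate, vary preprocessing and hyperparameters, and let the user choose the primary metric in the wizard.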

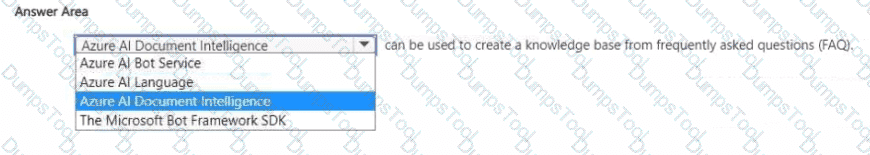

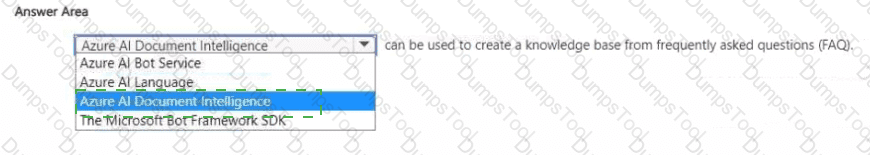

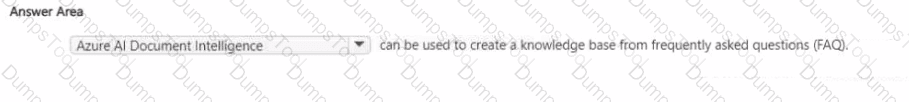

Select the answer that correctly completes the sentence.

The correct answer is Azure AI Language, which includes the Question Answering capability (previously known as QnA Maker). According to the Microsoft Azure AI Fundamentals (AI-900) study guide and Microsoft Learn documentation, the Azure AI Language service can be used to create a knowledge base from frequently asked questions (FAQ) and other structured or semi-structured text sources.

This service allows developers to build intelligent applications that can understand and respond to user questions in natural language by referencing prebuilt or custom knowledge bases. The Question Answering feature extracts pairs of questions and answers from documents, websites, or manually entered data and uses them to construct a searchable knowledge base. This knowledge base can then be integrated with Azure Bot Service or other conversational platforms to create interactive, self-service chatbots.

Here’s how it works:

Developers upload FAQ documents, URLs, or structured content.

Azure AI Language processes the content and identifies logical question-answer pairs.

The model stores these pairs in a knowledge base that can be queried by user input.

When users ask questions, the model finds the best matching answer using natural language understanding techniques.
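The steps above can be pictured with a toy knowledge base. This sketch matches questions by simple word overlap, which is an assumption made for brevity; the actual service ranks answers with natural language techniques, and the FAQ pairs and function names here are invented.

```python
import re

def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

# Hypothetical FAQ pairs standing in for an extracted knowledge base.
knowledge_base = [
    ("What are your opening hours?", "We are open 9:00 to 17:00, Monday to Friday."),
    ("How do I reset my password?", "Use the 'Forgot password' link on the sign-in page."),
    ("Where is my order?", "Track your order from the Orders page of your account."),
]

def best_answer(question, kb, min_overlap=1):
    """Return the answer whose stored question shares the most words with the input."""
    asked = tokenize(question)
    scored = [(len(asked & tokenize(q)), a) for q, a in kb]
    score, answer = max(scored, key=lambda pair: pair[0])
    return answer if score >= min_overlap else None

print(best_answer("I forgot my password, how can I reset it?", knowledge_base))
```

A bot would call something like `best_answer` for each user message and fall back to a default reply when no stored question matches.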

In contrast:

Azure AI Document Intelligence (Form Recognizer) is used to extract structured data from forms and documents, not to create FAQ knowledge bases.

Azure AI Bot Service is for managing and deploying conversational bots but does not generate knowledge bases.

Microsoft Bot Framework SDK provides tools for building conversational logic but still requires a knowledge source like Question Answering from Azure AI Language.

Therefore, the service that can create a knowledge base from FAQ content is Azure AI Language.

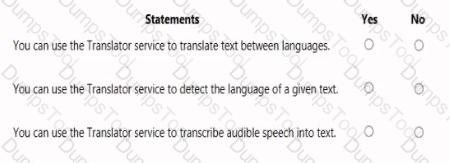

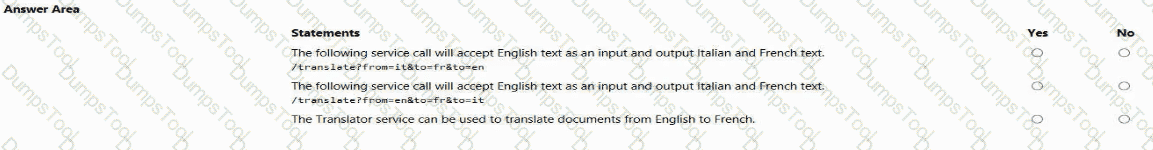

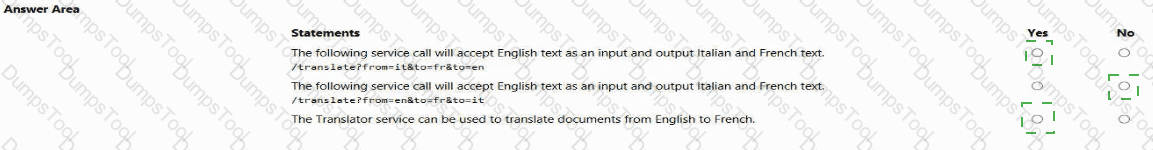

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

Yes, Yes, and No.

According to the Microsoft Azure AI Fundamentals (AI-900) official study materials and the Microsoft Learn module “Identify features of natural language processing (NLP) workloads on Azure”, the Azure Translator service is a cloud-based AI service within Azure Cognitive Services that provides real-time text translation across multiple languages.

“You can use the Translator service to translate text between languages.” – Yes. This is the core function of the Translator service. It takes text as input in one language and returns it in another using advanced neural machine translation models. This aligns with the AI-900 learning objective: “Describe the capabilities of Azure Cognitive Services for language”, which specifically names Azure Translator as the service used to perform automatic text translation. The service supports over 100 languages and dialects, offering both single-sentence and document-level translations.

“You can use the Translator service to detect the language of a given text.” – Yes. This statement is also true. The Translator service automatically detects the source language if it is not specified in the request. This feature is documented in the Azure Translator API, where the system identifies the input language before performing translation. The AI-900 exam content emphasizes this as one of the Translator service’s built-in capabilities: language detection for untagged text.

“You can use the Translator service to transcribe audible speech into text.” – No. This is not a function of Translator. Transcription (converting speech to text) is a speech AI workload, handled by the Azure Speech Service, not Translator. The Speech-to-Text capability in Azure Cognitive Services processes spoken audio input and returns the text transcription. The Translator service only works with text input, not direct audio.

Therefore, based on official AI-900 guidance, the verified configuration is:

✅ Yes – for text translation

✅ Yes – for language detection

❌ No – for speech transcription.

This aligns precisely with the AI-900 learning outcomes describing Text Translation and Language Detection as Translator capabilities, and Speech Transcription as part of the separate Speech service.

You are processing photos of runners in a race.

You need to read the numbers on the runners' shirts to identify the runners in the photos. Which type of computer vision should you use?

image classification

optical character recognition (OCR)

object detection

facial recognition

The correct answer is B. Optical Character Recognition (OCR).

Optical Character Recognition (OCR) is a feature of Azure AI Vision that converts printed or handwritten text within images into machine-readable text. In this scenario, the goal is to read runner numbers on shirts from race photos. OCR can identify and extract these numbers, allowing them to be associated with specific participants.

Option analysis:

A. Image classification: Categorizes entire images (e.g., “runner,” “crowd”), not text.

B. Optical Character Recognition (OCR) — ✅ Correct. Extracts alphanumeric text from images.

C. Object detection: Identifies and locates objects (e.g., shoes, cars) but doesn’t read text.

D. Facial recognition: Identifies individuals by matching facial features to known identities, not by reading numbers.

Therefore, to read and extract runner numbers from photos, the correct computer vision technique is Optical Character Recognition (OCR).

You need to provide customers with the ability to query the status of orders by using phones, social media, or digital assistants.

What should you use?

Azure AI Bot Service

the Azure AI Translator service

an Azure AI Document Intelligence model

an Azure Machine Learning model

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn module “Identify Azure services for conversational AI,” the Azure AI Bot Service is specifically designed to create intelligent conversational agents (chatbots) that can interact with users across multiple communication channels, such as web chat, social media, phone calls, Microsoft Teams, and digital assistants.

In this scenario, customers need the ability to query the status of their orders through various interfaces — including voice and text platforms. Azure AI Bot Service enables this by integrating with Azure AI Language (for understanding natural language), Azure Speech (for speech-to-text and text-to-speech capabilities), and Azure Communication Services (for telephony or chat integration).

The bot can interpret user input like “Where is my order?” or “Check my delivery status,” call backend systems (such as an order database or API), and then respond appropriately to the user through the same communication channel.

Let’s analyze the incorrect options:

B. Azure AI Translator Service: Used for real-time text translation between languages; it doesn’t handle conversation logic or database queries.

C. Azure AI Document Intelligence model: Extracts data from structured and semi-structured documents (e.g., invoices, receipts), not user queries.

D. Azure Machine Learning model: Builds and deploys predictive models, but doesn’t provide conversational or multi-channel interaction capabilities.

Thus, for enabling multi-channel conversational experiences where customers can inquire about order statuses using voice, chat, or digital assistants, the most appropriate solution is Azure AI Bot Service, as outlined in Azure’s AI conversational workload documentation.

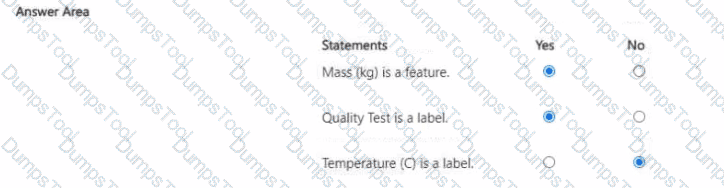

What are three stages in a transformer model? Each correct answer presents a complete solution.

NOTE: Each correct answer is worth one point.

object detection

embedding calculation

tokenization

next token prediction

anonymization

A transformer model is the foundational architecture behind many modern natural language processing systems such as GPT and BERT. It processes text data through multiple key stages. According to the Microsoft Azure AI Fundamentals (AI-900) curriculum and Microsoft Learn materials, the major stages of a transformer-based large language model are tokenization, embedding calculation, and next token prediction.

Tokenization (C) – The first step converts raw text into smaller units called tokens (words, subwords, or characters). This process allows the model to handle text in a structured numerical form rather than as raw language.

Embedding Calculation (B) – After tokenization, the tokens are mapped into high-dimensional numeric vectors, known as embeddings. These embeddings capture semantic relationships between words and phrases so that the model can understand context and meaning.

Next Token Prediction (D) – This stage is the heart of transformer operation, where the model predicts the next likely token in a sequence based on prior tokens. Repeated next-token predictions enable text generation, summarization, or translation.

Options A (object detection) and E (anonymization) are incorrect because they relate to vision and privacy workflows, not language modeling.
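The three stages can be walked through with a toy example. This is not a transformer: the whitespace tokenizer, the hand-filled embedding table, and the bigram counts that stand in for the attention layers are all simplifying assumptions chosen to keep the sketch short.

```python
from collections import Counter, defaultdict

corpus = "the cat sat on the mat . the cat ate ."

# Stage 1: tokenization. Split text into tokens and map each to an integer ID.
tokens = corpus.split()
vocab = {tok: i for i, tok in enumerate(dict.fromkeys(tokens))}
token_ids = [vocab[t] for t in tokens]

# Stage 2: embedding calculation. Each ID indexes a small table of vectors.
# The values here are placeholders; a trained model learns them instead.
embeddings = {i: [float(i), float(i % 2)] for i in vocab.values()}

# Stage 3: next token prediction. Bigram counts stand in for the layers a
# real transformer uses to score every candidate next token.
following = defaultdict(Counter)
for current, nxt in zip(tokens, tokens[1:]):
    following[current][nxt] += 1

def predict_next(token):
    """Return the token seen most often after `token`, or None if unseen."""
    counts = following.get(token)
    return counts.most_common(1)[0][0] if counts else None

print(token_ids)
print(predict_next("the"))
```

Calling `predict_next` repeatedly on its own output is a crude version of how repeated next-token prediction produces generated text.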

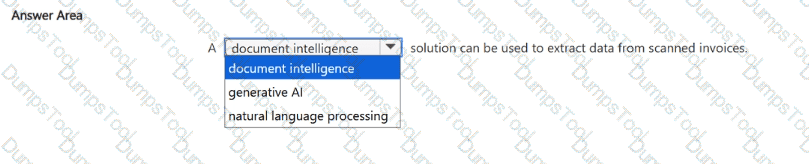

Select the answer that correctly completes the sentence.

The correct answer is Document Intelligence.

According to the Microsoft Azure AI Fundamentals (AI-900) study materials and Microsoft Learn documentation, the Azure AI Document Intelligence service (formerly known as Form Recognizer) is specifically designed to extract structured data from documents, including scanned invoices, receipts, forms, and business cards.

This service combines optical character recognition (OCR) with machine learning to analyze both the layout and semantic meaning of document content. When processing scanned invoices, Document Intelligence identifies and extracts fields such as invoice numbers, dates, totals, taxes, vendor names, and line-item details. The extracted information can then be automatically imported into business systems like accounting software or databases, eliminating manual data entry and improving operational efficiency.

Here’s why the other options are incorrect:

Generative AI: Focuses on creating new content such as text, images, or code (for example, using GPT-4 or DALL·E). It is not used for structured data extraction.

Natural Language Processing (NLP): Deals with understanding and generating human language from text-based input, not document scanning or layout interpretation.

The Document Intelligence workload excels at handling semi-structured documents where the location and format of data vary between samples. Microsoft’s prebuilt models—like Invoice, Receipt, Identity Document, and Contract—simplify extraction without requiring custom training.

In summary, if the task involves extracting data from scanned invoices, the appropriate Azure AI service is Azure AI Document Intelligence, which uses AI-powered document understanding to convert unstructured document images into structured, usable data.

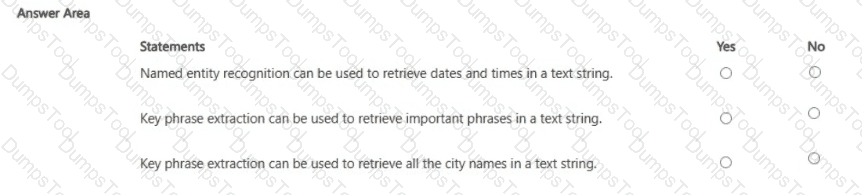

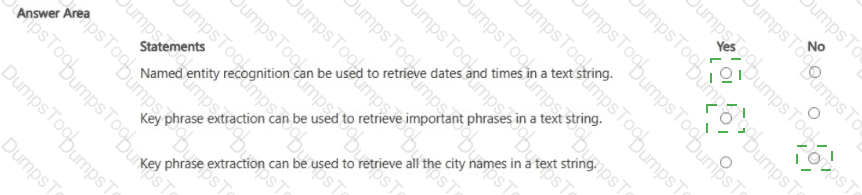

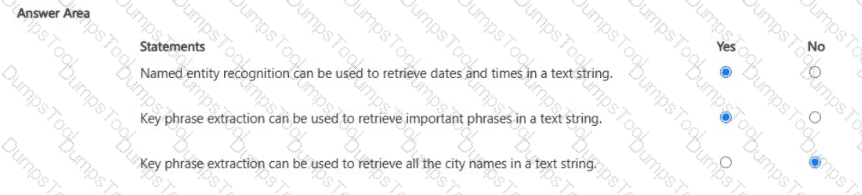

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

In Microsoft Azure AI Language Service, both Named Entity Recognition (NER) and Key Phrase Extraction are core features for text analytics. They serve distinct purposes in analyzing and structuring unstructured text data.

Named Entity Recognition (NER): NER is used to identify and categorize specific entities within text, such as people, organizations, locations, dates, times, and quantities. According to Microsoft Learn’s “Analyze text with Azure AI Language” module, NER scans text to extract these entities along with their types. Therefore, the statement “Named entity recognition can be used to retrieve dates and times in a text string” is True (Yes).

Key Phrase Extraction: This feature identifies the most important phrases or main topics in a block of text. It is useful for summarization or highlighting central ideas without classifying them into specific categories. Therefore, the statement “Key phrase extraction can be used to retrieve important phrases in a text string” is also True (Yes).

City Name Retrieval: While key phrase extraction highlights major phrases, it does not extract specific entities like cities or dates. Extracting such details requires Named Entity Recognition, which is designed to find named entities such as city names, people, or organizations. Hence, the statement “Key phrase extraction can be used to retrieve all the city names in a text string” is False (No).

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

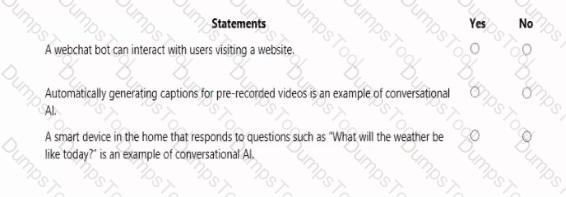

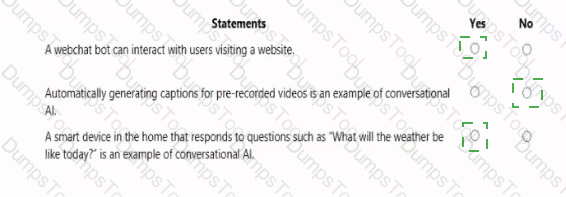

NOTE: Each correct selection is worth one point.

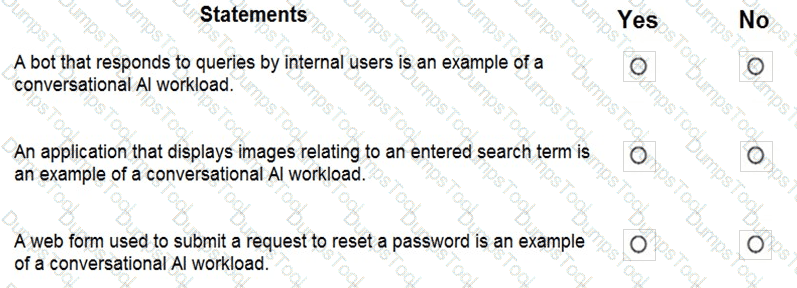

This question is derived from the Microsoft Azure AI Fundamentals (AI-900) learning module, particularly under “Describe features of conversational AI workloads on Azure.” It tests understanding of chatbot capabilities and design principles within the context of Azure Bot Service and Conversational AI.

Chatbots can support voice input – Yes. According to the AI-900 official materials, conversational AI systems such as chatbots can interact with users through text or voice. Using speech recognition services like Azure Cognitive Services Speech-to-Text, bots can interpret spoken input, and with Text-to-Speech, they can respond verbally. This enables voice-based chatbots used in virtual assistants, call centers, and customer support. Hence, voice input is fully supported by conversational AI solutions in Azure.

A separate chatbot is required for each communication channel – No. The Azure Bot Service is designed to provide multi-channel communication from a single bot instance. A single chatbot can communicate across several channels such as Microsoft Teams, Web Chat, Slack, Facebook Messenger, and email without needing separate bots for each platform. This centralized design allows developers to create, deploy, and manage one bot while configuring multiple channel connections through the Azure portal. Therefore, the statement is false.

Chatbots manage conversation flows by using a combination of natural language and constrained option responses – Yes. In Microsoft’s AI-900 training, chatbots are described as using Natural Language Processing (NLP) to understand free-form user input while also guiding interactions with predefined options such as buttons or quick replies. This hybrid approach ensures both flexibility and control, improving user experience and accuracy. Bots can interpret natural language via services like Language Understanding (LUIS) and also present structured options to guide conversations efficiently.

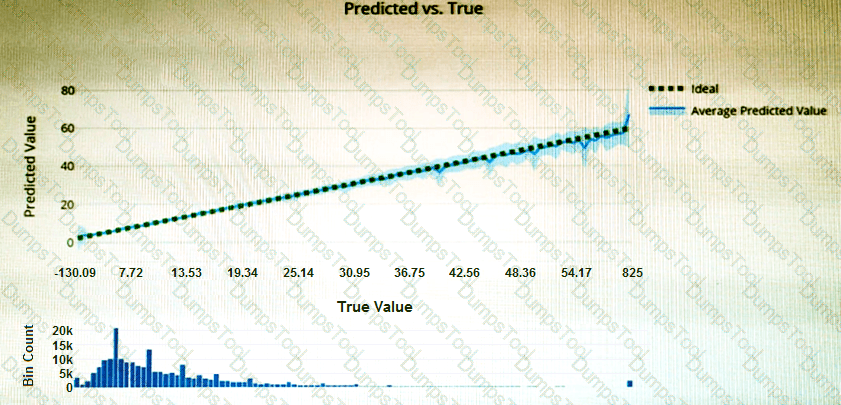

You have the Predicted vs. True chart shown in the following exhibit.

Which type of model is the chart used to evaluate?

classification

regression

clustering

What is a Predicted vs. True chart?

A Predicted vs. True chart shows the relationship between each predicted value and its corresponding true value for a regression problem, so the chart is used to evaluate a regression model. The closer the plotted points lie to the y = x line, the more accurate the model's predictions.
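Closeness to the y = x line can also be expressed numerically. The sketch below computes two common regression metrics, RMSE and R², on invented values; it is an illustration of the idea rather than the calculation Azure Machine Learning performs behind the chart.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return (RMSE, R squared) for paired true and predicted values."""
    residuals = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(r * r for r in residuals)             # squared distance from y = x
    rmse = math.sqrt(ss_res / len(residuals))
    mean_true = sum(y_true) / len(y_true)
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    r2 = 1 - ss_res / ss_tot
    return rmse, r2

# Hypothetical true values and two sets of predictions.
y_true = [10.0, 20.0, 30.0, 40.0]
perfect = list(y_true)                 # every point sits exactly on y = x
noisy = [12.0, 18.0, 33.0, 37.0]       # points scattered around y = x

print(regression_metrics(y_true, perfect))
print(regression_metrics(y_true, noisy))
```

Predictions that fall exactly on the line give an RMSE of zero and an R² of one; the further the points drift from the line, the larger the RMSE and the lower the R².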

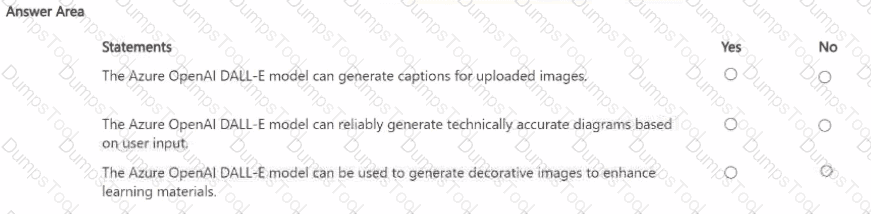

Which two actions can you perform by using the Azure OpenAI DALL-E model? Each correct answer presents a complete solution.

NOTE: Each correct answer is worth one point.

Create images.

Use optical character recognition (OCR).

Detect objects in images.

Modify images.

Generate captions for images.

The correct answers are A. Create images and D. Modify images.

The Azure OpenAI DALL-E model is a text-to-image generative AI model that can create original images and modify existing ones based on text prompts. According to Microsoft Learn and Azure OpenAI documentation, DALL-E interprets natural language descriptions to produce unique and creative visual content, making it useful for design, illustration, marketing, and educational applications.

Create images (A) – DALL-E can generate new images entirely from textual input. For example, the prompt “a futuristic city skyline at sunrise” would result in a custom-generated artwork that visually represents that description.

Modify images (D) – DALL-E also supports inpainting and outpainting, allowing users to edit or expand existing images. You can replace parts of an image (for example, changing a background or object) or add new elements consistent with the visual style of the original.

The remaining options are incorrect:

B. OCR is performed by Azure AI Vision, not DALL-E.

C. Detect objects in images is also an Azure AI Vision (Image Analysis) feature.

E. Generate captions for images is handled by Azure AI Vision, not DALL-E, since DALL-E generates—not interprets—visuals.

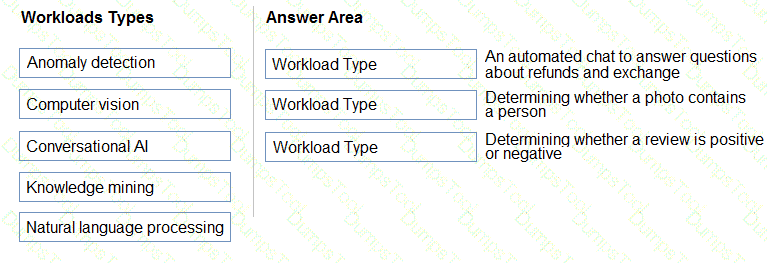

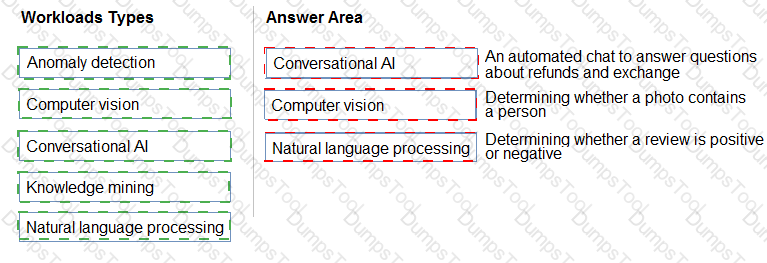

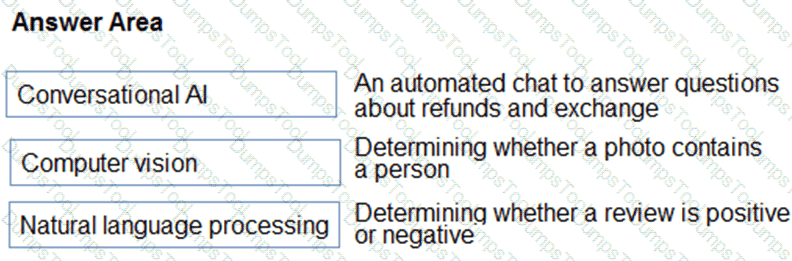

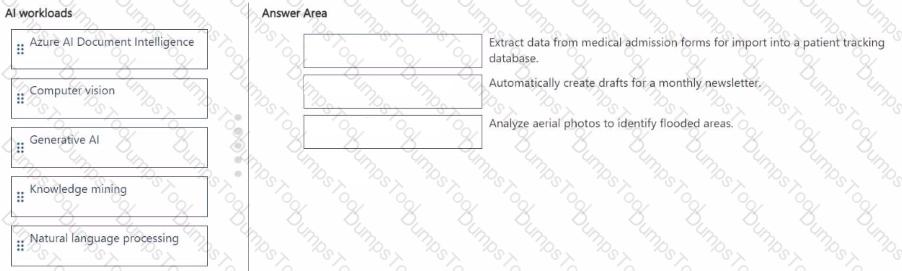

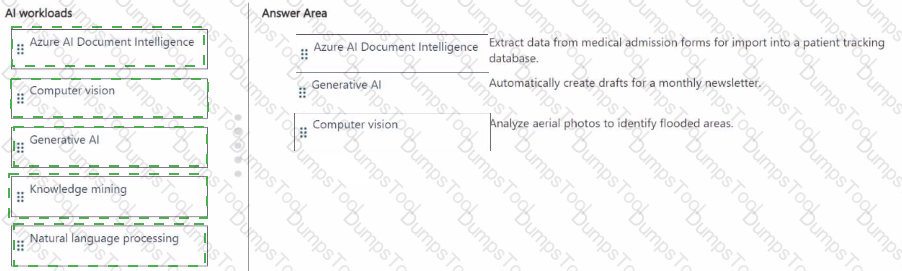

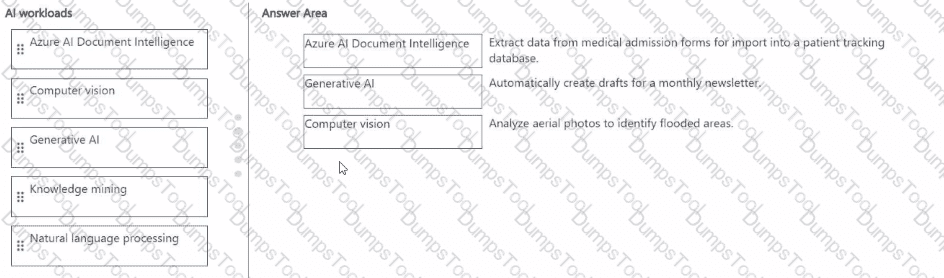

Match the types of AI workloads to the appropriate scenarios.

To answer, drag the appropriate workload type from the column on the left to its scenario on the right. Each workload type may be used once, more than once, or not at all.

NOTE: Each correct selection is worth one point.

Box 3: Natural language processing

Natural language processing (NLP) is used for tasks such as sentiment analysis, topic detection, language detection, key phrase extraction, and document categorization.

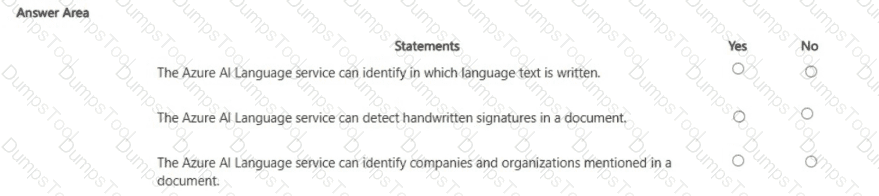

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

The Azure AI Language service (part of Azure Cognitive Services) provides a set of natural language processing (NLP) capabilities designed to analyze and interpret text data. Its core features include language detection, key phrase extraction, sentiment analysis, and named entity recognition (NER).

Language Identification – YES. According to the Microsoft Learn module “Analyze text with Azure AI Language,” one of the service’s built-in capabilities is language detection, which determines the language of a given text string (e.g., English, Spanish, or French). This allows applications to automatically adapt to multilingual input.

Handwritten Signature Detection – NO. The Azure AI Language service only processes text-based data; it does not analyze images or handwriting. Detecting handwritten signatures requires computer vision capabilities, specifically Azure AI Vision or Azure AI Document Intelligence, which can extract and interpret visual content from scanned documents or images.

Identifying Companies and Organizations – YES. The Named Entity Recognition (NER) feature within Azure AI Language can identify entities such as people, locations, dates, organizations, and companies mentioned in text. It tags these entities with categories, enabling structured analysis of unstructured data.

✅ Summary:

Language detection → Yes (supported by AI Language).

Handwritten signatures → No (requires Computer Vision).

Entity recognition for companies/organizations → Yes (supported by AI Language NER).

A smart device that responds to the question “What is the stock price of Contoso, Ltd.?” is an example of which AI workload?

computer vision

anomaly detection

knowledge mining

natural language processing

The question describes a smart device that can understand and respond to a spoken or written question such as, “What is the stock price of Contoso, Ltd.?” This scenario directly maps to the Natural Language Processing (NLP) workload in Microsoft Azure AI.

According to the Microsoft AI Fundamentals (AI-900) study guide and the Microsoft Learn module “Describe features of common AI workloads,” NLP enables systems to understand, interpret, and generate human language. Azure AI Language and Azure Speech services are examples of NLP-based solutions.

In this case, the smart device performs several NLP tasks:

Speech recognition – converts spoken input into text.

Language understanding – interprets the user’s intent, i.e., retrieving the stock price of a specific company.

Response generation – formulates a meaningful answer that can be presented back as text or speech.

This process shows a full pipeline of natural language understanding (NLU) and conversational AI. It does not involve visual data (computer vision), data pattern analysis (anomaly detection), or document search (knowledge mining).

Hence, the correct AI workload is D. Natural Language Processing.

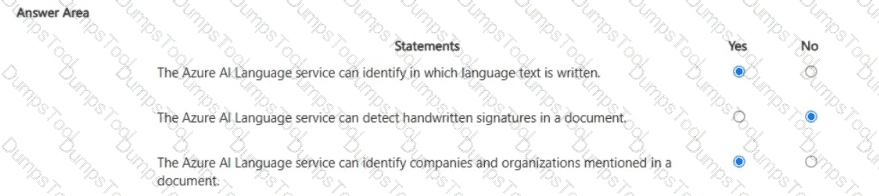

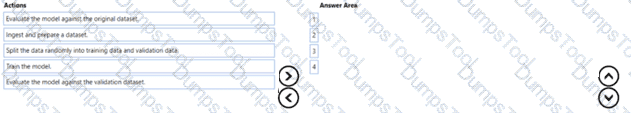

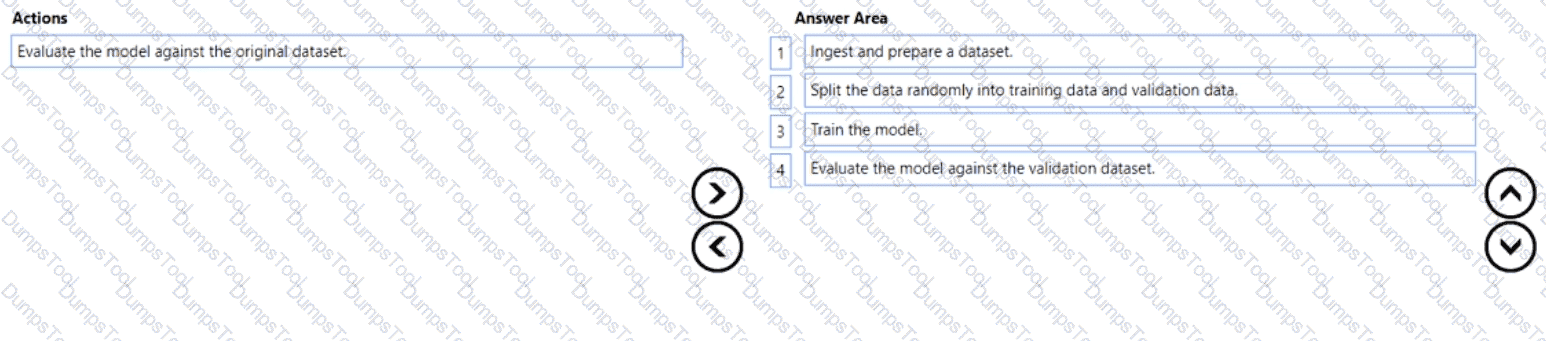

You plan to deploy an Azure Machine Learning model by using the Machine Learning designer.

Which four actions should you perform in sequence? To answer, move the appropriate actions from the list of actions to the answer area and arrange them in the correct order.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Identify features of common machine learning types”, the standard workflow for creating and deploying a machine learning model — especially within Azure Machine Learning Designer — follows a structured sequence of steps to ensure that the model is trained effectively and evaluated correctly.

Here’s the detailed breakdown of the correct order:

Import and prepare a dataset: This is always the first step in the machine learning lifecycle. The dataset is imported into Azure Machine Learning and cleaned or preprocessed. Preparation might include handling missing values, normalizing data, removing outliers, and encoding categorical variables. This ensures the dataset is ready for modeling.

Split the data randomly into training data and validation data: The dataset is then divided into two parts: the training set and the validation (or testing) set. Typically, around 70–80% of the data is used for training and 20–30% for validation. This step ensures that the model can later be evaluated on data it has not seen, so that overfitting can be detected.

Train the model: During this stage, the machine learning algorithm learns patterns from the training data. Azure Machine Learning Designer provides multiple algorithms (classification, regression, clustering, etc.) that can be applied using “Train Model” components.

Evaluate the model against the validation dataset: Finally, the trained model’s performance is tested using the validation dataset. Evaluation metrics such as accuracy, precision, recall, or RMSE (depending on the model type) are calculated to assess how well the model generalizes to new data.

The incorrect option — “Evaluate the model against the original dataset” — is not used in proper ML workflows, because evaluating on the same data used for training would give misleadingly high accuracy due to overfitting.
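The four steps above can be sketched in plain Python. This is a minimal illustration, not the designer's own implementation: the synthetic dataset, the 70/30 split, the helper names (`split_dataset`, `train_linear_model`, `rmse`), and the choice of a one-variable least-squares regression are all assumptions made for the example.

```python
import math
import random


def split_dataset(rows, train_fraction=0.7, seed=42):
    """Shuffle the rows and split them into (training, validation) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]


def train_linear_model(rows):
    """Fit y = slope * x + intercept by ordinary least squares."""
    n = len(rows)
    mean_x = sum(x for x, _ in rows) / n
    mean_y = sum(y for _, y in rows) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in rows)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in rows)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def rmse(model, rows):
    """Root mean squared error of the model's predictions on the given rows."""
    slope, intercept = model
    squared = [(slope * x + intercept - y) ** 2 for x, y in rows]
    return math.sqrt(sum(squared) / len(rows))


# 1. Import and prepare a dataset (synthetic: y = 3x + 2 plus bounded noise).
noise = random.Random(0)
dataset = [(x, 3 * x + 2 + noise.uniform(-1, 1)) for x in range(100)]

# 2. Split the data randomly into training data and validation data.
training, validation = split_dataset(dataset, train_fraction=0.7)

# 3. Train the model on the training data only.
model = train_linear_model(training)

# 4. Evaluate the model against the validation dataset.
validation_rmse = rmse(model, validation)
print(f"slope={model[0]:.3f} intercept={model[1]:.3f} validation RMSE={validation_rmse:.3f}")
```

Because the validation rows never reach `train_linear_model`, the reported RMSE reflects performance on unseen data rather than on the rows the model was fitted to.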

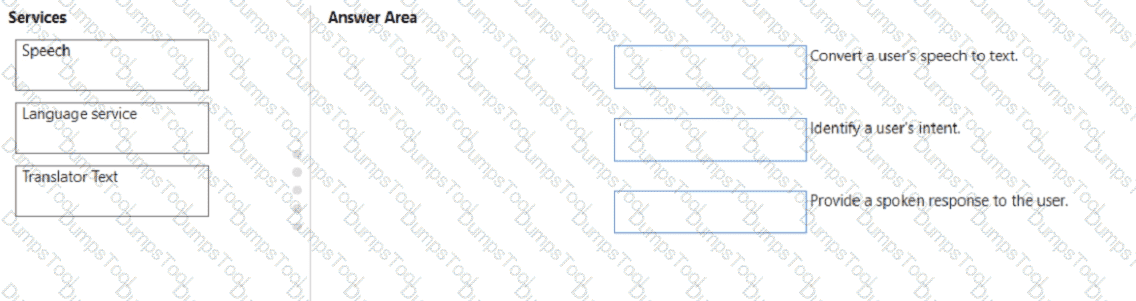

You need to build an app that will read recipe instructions aloud to support users who have reduced vision.

Which service should you use?

Text Analytics

Translator Text

Speech

Language Understanding (LUIS)

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Identify features of speech capabilities in Azure Cognitive Services”, the Azure Speech service provides functionality for converting text to spoken words (speech synthesis) and speech to text (speech recognition).

In this scenario, the app must read recipe instructions aloud to assist users with visual impairments. This task is achieved through speech synthesis, also known as text-to-speech (TTS). The Azure Speech service uses advanced neural network models to generate natural-sounding voices in many languages and accents, making it ideal for accessibility scenarios such as screen readers, virtual assistants, and educational tools.

Microsoft Learn defines Speech service as a unified offering that includes:

Speech-to-text (speech recognition): Converts spoken words into text.

Text-to-speech (speech synthesis): Converts written text into natural-sounding audio output.

Speech translation: Translates spoken language into another language in real time.

Speaker recognition: Identifies or verifies a person based on their voice.

The other options do not fit the requirements:

A. Text Analytics – Performs text-based natural language analysis such as sentiment, key phrase extraction, and entity recognition, but it cannot produce audio output.

B. Translator Text – Translates text between languages but does not generate speech output.

D. Language Understanding (LUIS) – Interprets user intent from text or speech for conversational bots but does not read text aloud.

Therefore, based on the AI-900 curriculum and Microsoft Learn documentation, the correct service for converting recipe text to spoken audio is the Azure Speech service.

✅ Final Answer: C. Speech
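As a rough sketch of what the app would hand to a text-to-speech engine, the snippet below turns recipe steps into an SSML document, the markup that Azure Speech accepts for synthesis (for example through the Speech SDK's `speak_ssml_async`). The function name `recipe_to_ssml`, the voice `en-US-JennyNeural`, and the pause length are assumptions chosen for illustration; no audio is produced here.

```python
from xml.sax.saxutils import escape


def recipe_to_ssml(steps, voice="en-US-JennyNeural", pause_ms=600):
    """Build an SSML document that reads numbered recipe steps with pauses."""
    # Escape characters such as & and < so the markup stays well-formed.
    spoken = [f"Step {number}. {escape(step)}" for number, step in enumerate(steps, start=1)]
    # Insert a short pause between consecutive steps.
    body = f'<break time="{pause_ms}ms"/>'.join(spoken)
    return (
        '<speak version="1.0" xmlns="http://www.w3.org/2001/10/synthesis" xml:lang="en-US">'
        f'<voice name="{voice}">{body}</voice>'
        "</speak>"
    )


ssml = recipe_to_ssml(["Whisk the eggs & sugar.", "Bake for 20 minutes."])
print(ssml)
```

Keeping the markup generation separate from the synthesis call makes the text preparation easy to check without a Speech resource.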

Which Azure AI Language feature can be used to retrieve data, such as dates and people's names, from social media posts?

language detection

speech recognition

key phrase extraction

entity recognition

The Azure AI Language service provides several NLP features, including language detection, key phrase extraction, sentiment analysis, and named entity recognition (NER).

When you need to extract specific data points such as dates, names, organizations, or locations from unstructured text (for example, social media posts), the correct feature is Entity Recognition.

Entity Recognition identifies and classifies information in text into predefined categories like:

Person names (e.g., “John Smith”)

Organizations (e.g., “Contoso Ltd.”)

Dates and times (e.g., “October 22, 2025”)

Locations, events, and quantities

This capability helps transform unstructured textual data into structured data that can be analyzed or stored.

Option analysis:

A (Language detection): Determines the language of a text (e.g., English, French).

B (Speech recognition): Converts spoken audio to text; not applicable here.

C (Key phrase extraction): Identifies important phrases or topics but not specific entities like names or dates.

D (Entity recognition): Correctly extracts names, dates, and other specific data from text.

Hence, the accurate feature for this scenario is D. Entity Recognition.
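To make the idea of turning unstructured posts into structured data concrete, here is a small sketch. It assumes a client that behaves like `TextAnalyticsClient` from the `azure-ai-textanalytics` Python package, whose `recognize_entities` method returns one result per document with an `entities` list; the helper name `extract_entities` is hypothetical, and the client is passed in so the function itself needs no credentials.

```python
from collections import defaultdict


def extract_entities(client, posts):
    """Group the entities recognized in a batch of posts by category.

    Assumption: `client.recognize_entities(posts)` returns one result per
    post, each with `is_error` and an `entities` list whose items expose
    `text` and `category` (as the azure-ai-textanalytics client does).
    """
    grouped = defaultdict(list)
    for result in client.recognize_entities(posts):
        if result.is_error:
            continue  # skip documents the service could not process
        for entity in result.entities:
            grouped[entity.category].append(entity.text)
    return dict(grouped)


# With the real SDK, the client would be created roughly like this
# (endpoint and key are placeholders):
# from azure.ai.textanalytics import TextAnalyticsClient
# from azure.core.credentials import AzureKeyCredential
# client = TextAnalyticsClient("<endpoint>", AzureKeyCredential("<key>"))
```

The returned dictionary maps a category such as `Person` or `DateTime` to the matching text fragments, which is the structured form the explanation refers to.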

You have a website that includes customer reviews.

You need to store the reviews in English and present the reviews to users in their respective language by recognizing each user’s geographical location.

Which type of natural language processing workload should you use?

translation

language modeling

key phrase extraction

speech recognition

According to the Microsoft Azure AI Fundamentals (AI-900) syllabus and Microsoft Learn module “Describe features of natural language processing (NLP) workloads on Azure,” translation is a core NLP workload that converts text from one language into another while maintaining meaning and context.

In this scenario, the website stores reviews in English and must present them in the user’s native language based on geographical location. This directly requires a translation workload, which uses Azure Cognitive Services — specifically, the Translator service — to automatically translate content dynamically for each user.

Other options explained:

B. Language modeling involves predicting the next word in a sentence or understanding linguistic patterns; it’s used in model training, not translation.

C. Key phrase extraction identifies main ideas in text, not language conversion.

D. Speech recognition converts spoken words into written text but does not perform translation or handle geographic adaptation.

Microsoft’s Translator service supports real-time text translation, multi-language detection, and context preservation, making it ideal for global websites. The AI-900 study guide emphasizes translation as one of the most common NLP workloads, enabling applications to break language barriers and enhance accessibility for diverse audiences.

Therefore, based on official Microsoft Learn material, the correct answer is:

✅ A. translation.

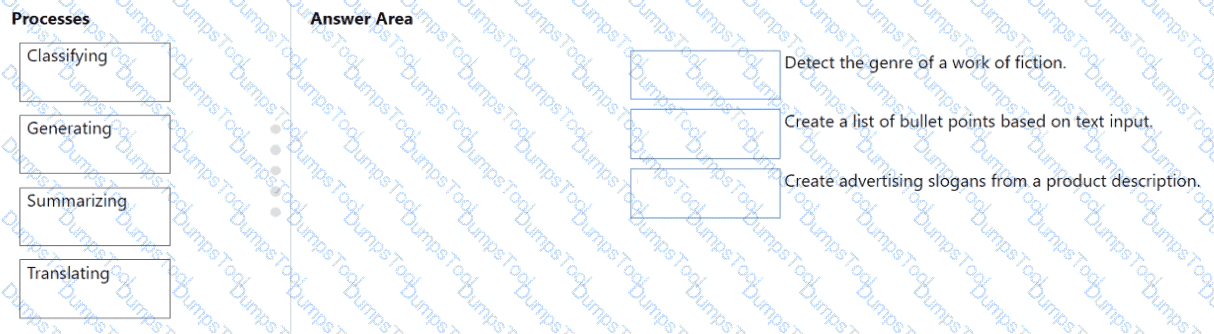

For each of the following statements, select Yes if the statement is true. Otherwise, select No.

NOTE: Each correct selection is worth one point.

According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and Microsoft Learn modules on machine learning concepts, ensuring that the accuracy of a predictive model can be proven requires data partitioning—specifically splitting the available data into training and testing datasets. This is a foundational concept in supervised machine learning.

When you split the data, typically about 70–80% of the dataset is used for training the model, while the remaining 20–30% is used for testing (or validation). The reason behind this approach is to ensure that the model’s performance metrics (such as accuracy, precision, recall, and F1-score) are evaluated on data the model has never seen before. This exposes overfitting and allows you to demonstrate that the model generalizes well to new, unseen data.

In the AI-900 Microsoft Learn content under “Describe the machine learning process”, it is explained that after cleaning and transforming the data, the next essential step is data splitting to “evaluate model performance objectively.” By keeping training and testing data separate, you can prove the reliability and accuracy of the model’s predictions, which is particularly crucial in sensitive domains like clinical or healthcare analytics, where decision transparency and validation are vital.

Option A (Train the model by using the clinical data) is incorrect because you should not train and evaluate on the same data—it would lead to biased results.

Option C (Train the model using automated ML) is incorrect because automated ML is a method for training and tuning, but it doesn’t inherently prove accuracy.

Option D (Validate the model by using the clinical data) is also incorrect if you use the same dataset for validation and training—it would not prove true accuracy.

Therefore, per Microsoft’s official AI-900 study content, the verified correct answer is B. Split the clinical data into two datasets.
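The metrics named in the explanation (accuracy, precision, recall, and F1-score) can be computed from held-out test labels as in the sketch below. The label lists are made-up illustration data, not clinical results, and the function name `classification_metrics` is an assumption.

```python
def classification_metrics(actual, predicted, positive=1):
    """Compute accuracy, precision, recall and F1 for a binary classifier."""
    pairs = list(zip(actual, predicted))
    tp = sum(1 for a, p in pairs if a == positive and p == positive)
    fp = sum(1 for a, p in pairs if a != positive and p == positive)
    fn = sum(1 for a, p in pairs if a == positive and p != positive)
    tn = sum(1 for a, p in pairs if a != positive and p != positive)

    accuracy = (tp + tn) / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical labels for ten held-out test records the model never saw in training.
actual = [1, 0, 1, 1, 0, 0, 1, 0, 1, 0]
predicted = [1, 0, 0, 1, 0, 1, 1, 0, 1, 0]

metrics = classification_metrics(actual, predicted)
print(metrics)
```

The numbers are only meaningful as evidence of accuracy when `actual` and `predicted` come from the test partition rather than from the training rows.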

You need to make the press releases of your company available in a range of languages.

Which service should you use?

Translator Text

Text Analytics

Speech

Language Understanding (LUIS)

The Translator Text service (part of Azure Cognitive Services) provides real-time text translation across multiple languages. According to Microsoft Learn’s AI-900 module on “Identify features of Natural Language Processing (NLP) workloads”, translation is one of the four main NLP tasks, alongside key phrase extraction, sentiment analysis, and language understanding.

In this scenario, the company wants to make press releases available in a range of languages, which requires converting text from one language to another while preserving meaning and tone. The Translator Text API supports more than 100 languages and can be integrated into web apps, chatbots, or content management systems for automatic multilingual publishing.

The other options perform different functions:

Text Analytics (B) extracts insights such as key phrases or sentiment but does not translate.

Speech (C) focuses on converting between speech and text, not text translation.

Language Understanding (LUIS) (D) identifies user intent but does not perform translation.

Therefore, to provide multilingual press releases, the appropriate service is A. Translator Text, which ensures accurate, fast, and scalable translation across global audiences.
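For a sense of how a publishing system might call the service, the sketch below builds (but does not send) a request to the Translator v3 `translate` REST endpoint and parses a response of the documented shape. The helper names, the placeholder key and region, and the use of the global endpoint are assumptions; whether the region header is needed depends on how the resource was created.

```python
import json
import urllib.parse
import urllib.request

TRANSLATOR_ENDPOINT = "https://api.cognitive.microsofttranslator.com/translate"


def build_translate_request(text, target_languages, key, region):
    """Prepare a POST request that asks for `text` in every target language."""
    query = urllib.parse.urlencode(
        [("api-version", "3.0")] + [("to", lang) for lang in target_languages]
    )
    body = json.dumps([{"Text": text}]).encode("utf-8")
    headers = {
        "Ocp-Apim-Subscription-Key": key,        # placeholder credential
        "Ocp-Apim-Subscription-Region": region,  # assumed regional resource
        "Content-Type": "application/json; charset=UTF-8",
    }
    return urllib.request.Request(
        f"{TRANSLATOR_ENDPOINT}?{query}", data=body, headers=headers, method="POST"
    )


def parse_translations(response_json):
    """Map each target language code to the translated text of the first input."""
    return {item["to"]: item["text"] for item in response_json[0]["translations"]}


request = build_translate_request("Hello", ["fr", "de"], "<key>", "<region>")
print(request.full_url)
# Sending it would be: urllib.request.urlopen(request), then json.load(...) on the reply.
```

One request can carry several `to` parameters, so a single press release can be fanned out to multiple languages in one call.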

Which AI service can you use to interpret the meaning of a user input such as “Call me back later”?

Translator Text

Text Analytics

Speech

Language Understanding (LUIS)

According to the Microsoft Azure AI Fundamentals (AI-900) learning content, Language Understanding Intelligent Service (LUIS) is part of Azure Cognitive Services used to interpret the meaning or intent behind a user’s input in natural language. When a user says, “Call me back later,” the system must recognize that the user intends for a call to be scheduled or delayed—this is not just about translating or analyzing text but understanding intent and relevant entities.

LUIS allows developers to train models to identify intents (such as ScheduleCall, CancelMeeting, etc.) and extract key entities (like names, times, or actions) from text inputs. It is typically integrated with conversational agents such as Azure Bot Service, enabling more natural, human-like interactions.

Other options do not fit the scenario:

Translator Text (A) translates text between languages but does not interpret meaning.

Text Analytics (B) performs sentiment analysis, key phrase extraction, and named entity recognition, but it doesn’t identify intent.

Speech (C) converts spoken language to text or text to speech but doesn’t interpret what the words mean.

Therefore, for understanding user intent such as “Call me back later,” the correct AI service is D. Language Understanding (LUIS).
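The difference between an intent and an entity can be illustrated with a deliberately simple stand-in. The code below is not LUIS and does not call any Azure API: it is a toy word-overlap scorer, and the intent names, example utterances, and the `TIME_WORDS` list are all hypothetical.

```python
import re

# Hypothetical labeled example utterances, similar in spirit to training data.
INTENT_EXAMPLES = {
    "ScheduleCall": ["call me back later", "phone me tomorrow", "ring me this afternoon"],
    "CancelMeeting": ["cancel my meeting", "drop the 3pm meeting", "remove the appointment"],
}

# Hypothetical entity vocabulary: words that indicate when something should happen.
TIME_WORDS = {"later", "tomorrow", "today", "tonight"}


def tokenize(text):
    """Lowercase the text and split it into word tokens."""
    return re.findall(r"[a-z0-9']+", text.lower())


def predict(utterance):
    """Return the best-matching intent, its overlap score, and any time entities."""
    tokens = tokenize(utterance)
    unique = set(tokens)
    scores = {}
    for intent, examples in INTENT_EXAMPLES.items():
        vocabulary = {tok for example in examples for tok in tokenize(example)}
        scores[intent] = len(unique & vocabulary) / len(unique) if unique else 0.0
    top = max(scores, key=scores.get)
    entities = [tok for tok in tokens if tok in TIME_WORDS]
    return {"intent": top, "score": scores[top], "entities": entities}


prediction = predict("Call me back later?")
print(prediction)
```

A trained language understanding model generalizes far beyond word overlap, but the output has the same two parts: what the user wants (the intent) and the details needed to act on it (the entities).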

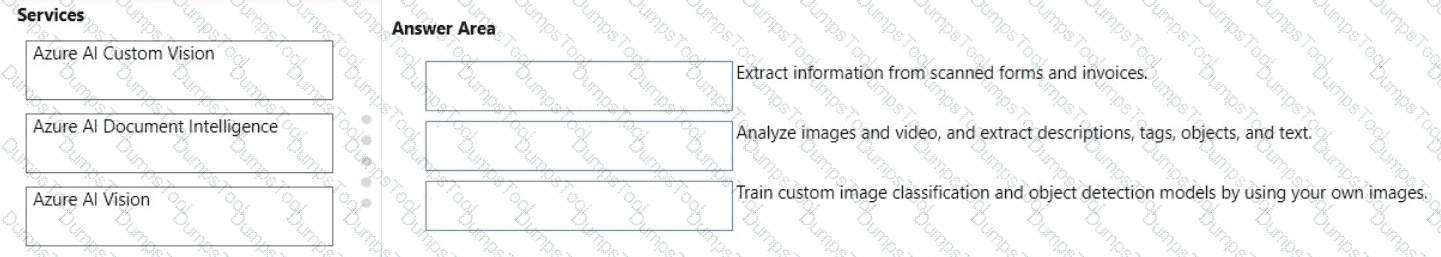

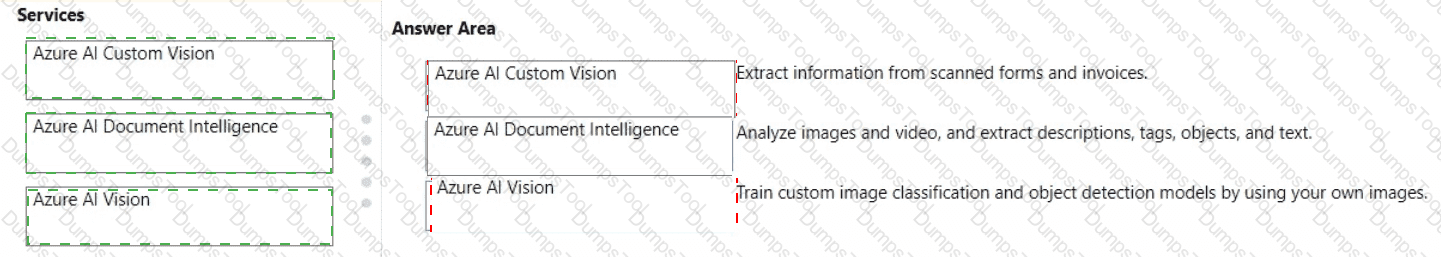

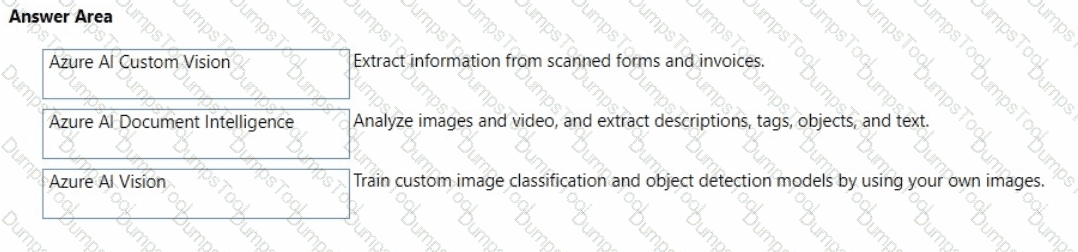

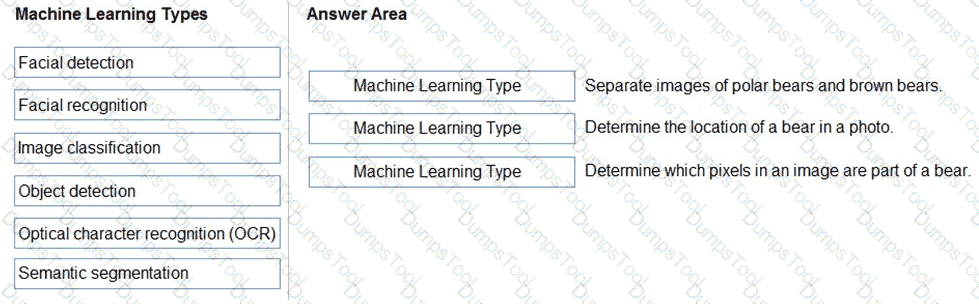

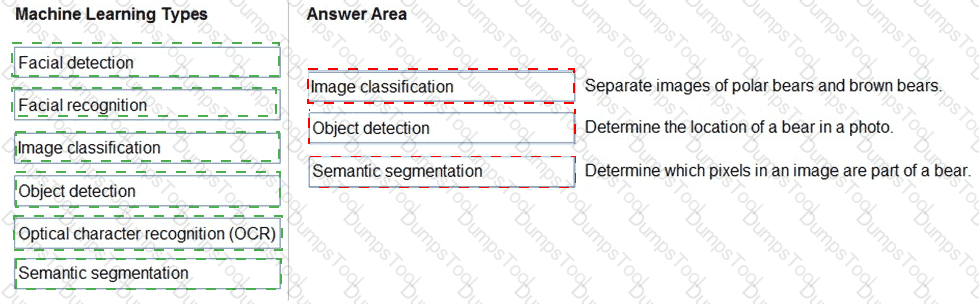

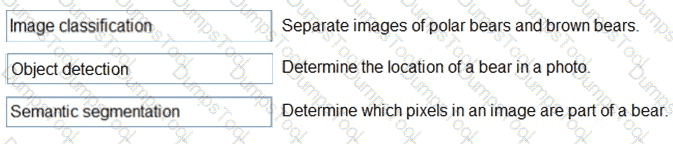

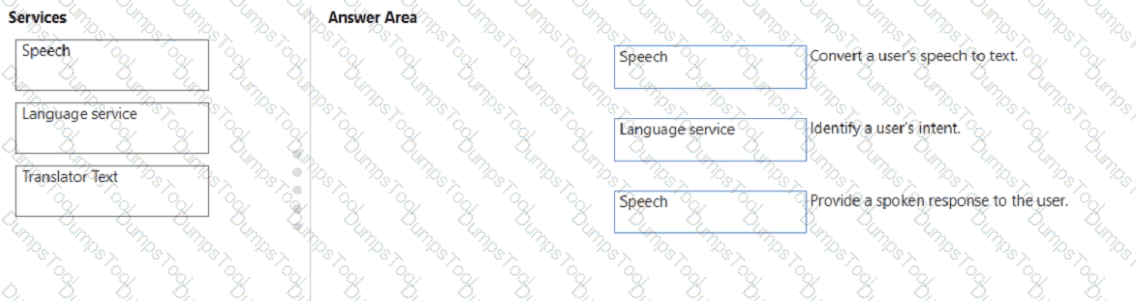

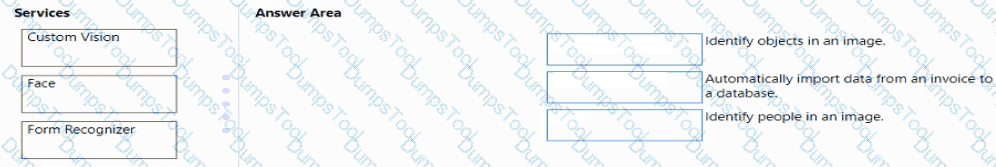

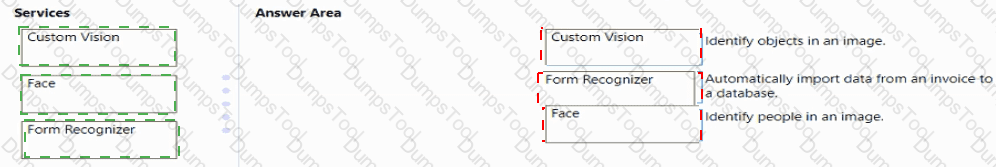

Match the computer vision service to the appropriate AI workload.

To answer, drag the appropriate service from the column on the left to its workload on the right. Each service may be used once, more than once, or not at all.

NOTE: Each correct match is worth one point.

This question evaluates understanding of the different Azure AI Computer Vision services and their distinct functionalities, as covered in the Microsoft AI-900 study guide and Microsoft Learn modules under “Describe features of common AI workloads” and “Identify Azure services for computer vision.”